Node-RED flows for Docker

In this post I’m continuing to play with Node-RED. This time I want to test Node-RED in a Docker container and create a first flow using the Weather Company APIs.

Docker is a way to package and distribute software. It is a container technology that makes it easy to package software, along with all its dependencies, and ship it to the developer across the room, to staging or production, or wherever it needs to run.

Starting a Docker container is faster than starting a virtual machine (milliseconds vs. minutes). If you want to run multiple jobs on a single server, the traditional approach would be to carve it up into virtual machines and use each VM to run one job. But VMs are slow to start, given that they must boot an entire operating system, which can take minutes. They’re also resource intensive, as each VM has to run a full OS instance.

Containers offer some of this same behavior but are much faster, because starting a container is like starting a process. Docker containers also require much less overhead, really no more expensive than a process. Unlike VMs, containers share the host operating system's kernel, which makes them much more efficient than hypervisor-based VMs in terms of system resources. Instead of virtualizing hardware, containers rest on top of a single Linux instance.

From my point of view, one of the big advantages of Docker is the ability to run any platform with its own config on top of your infrastructure. The same Docker configuration can also be used in a variety of environments, so I can run my Node-RED flows across multiple IaaS/PaaS providers without any extra tweaks. Every provider, from AWS to Google and from MS Azure to IBM Bluemix, supports Docker.

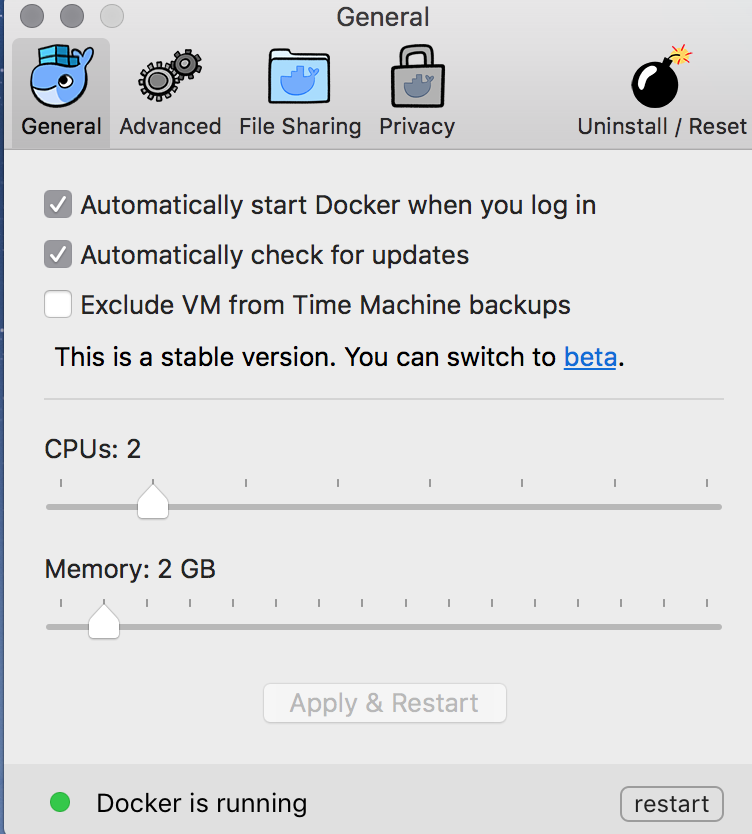

Since Docker runs everywhere I installed Docker on my wonderful MacBook Pro to play with Node-RED 🙂

With the shell command:

docker run -it -p 1880:1880 --name mnnodered nodered/node-red-docker

I created a container named mnnodered with port 1880 published to the host; the nodered/node-red-docker image is downloaded from Docker Hub if it is not already present locally.
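To check from a second terminal that the editor is really answering on the published port, a small polling loop can help. This is only a minimal sketch, assuming curl is installed on the host; the function name wait_for_nodered and the default of 30 attempts are my own choices for illustration, not something provided by Node-RED or Docker.

```shell
#!/bin/sh
# wait_for_nodered [URL] [TRIES]
# Hypothetical helper: polls URL once per second until it answers with a
# successful HTTP status, or gives up after TRIES attempts.
wait_for_nodered() {
    url="${1:-http://localhost:1880/}"
    tries="${2:-30}"
    i=0
    while [ "$i" -lt "$tries" ]; do
        # -f: treat HTTP errors as failure, -sS: quiet but still show errors
        if curl -fsS -o /dev/null "$url"; then
            echo "Node-RED is answering at $url"
            return 0
        fi
        i=$((i + 1))
        sleep 1
    done
    echo "no answer from $url after $tries attempts" >&2
    return 1
}
```

Calling `wait_for_nodered` with no arguments checks http://localhost:1880/, which matches the port mapping used above.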

To add additional nodes, such as the Watson IoT nodes, I opened a shell in the container and ran the npm command:

| => docker exec -it mnnodered /bin/bash

node-red@621ef5a54ede:~$ cd /data/

node-red@621ef5a54ede:/data$ npm install node-red-contrib-ibm-watson-iot
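The same installation can also be done without an interactive shell. A hedged sketch: install_node is a hypothetical helper name, and it assumes the container keeps its user directory in /data as in the session above. It restarts the container afterwards, since nodes installed with npm on the command line are only picked up when Node-RED starts.

```shell
#!/bin/sh
# install_node CONTAINER PACKAGE
# Hypothetical helper: installs an npm package into /data inside a running
# Node-RED container, then restarts the container so the new nodes load.
install_node() {
    container="$1"
    package="$2"
    # "$1" inside the single quotes is expanded by the shell running in the
    # container; the package name is handed to it as its first argument.
    docker exec "$container" sh -c 'cd /data && npm install "$1"' sh "$package" &&
        docker restart "$container"
}
```

For the nodes used here, the call would be `install_node mnnodered node-red-contrib-ibm-watson-iot`.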

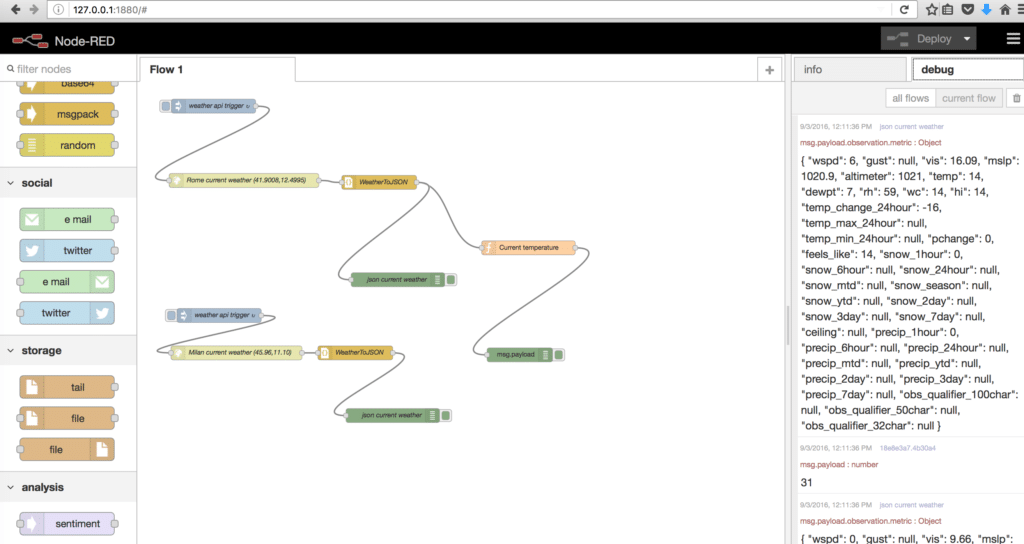

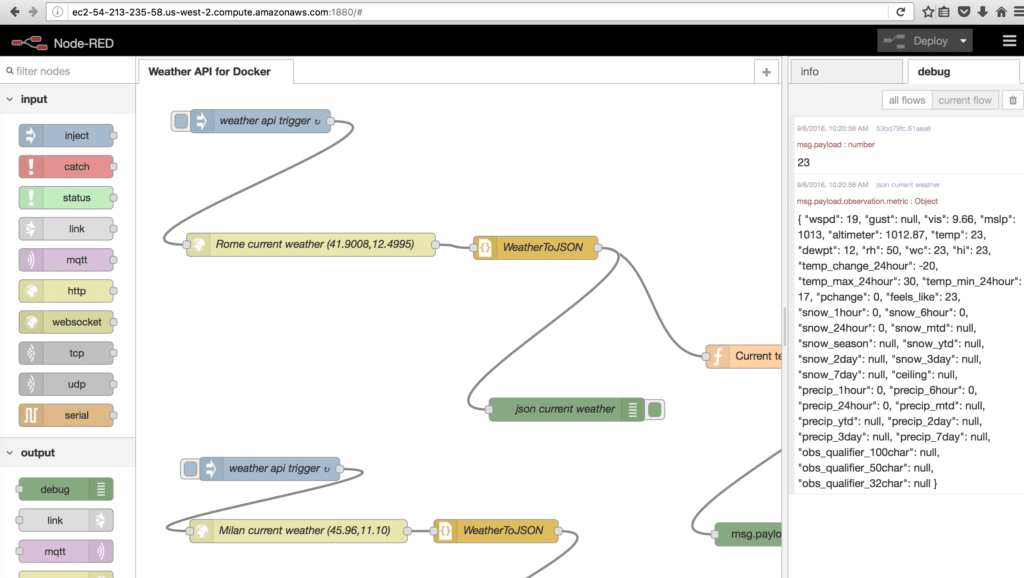

Node-RED runs on localhost under Docker and of course I used the Weather Company service on Bluemix:

Let’s rock and roll by exporting the Docker container:

| => docker export --output="NodeREDWeatherAPI.tar" mnnodered
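Before copying the archive to another machine, it may be worth checking that the flows file is actually inside it, because docker export does not include the contents of volumes attached to the container. A minimal sketch, assuming the flows live in /data/flows.json (the path passed to Node-RED further down in this post); archive_has_flows is a name I made up for illustration.

```shell
#!/bin/sh
# archive_has_flows ARCHIVE
# Hypothetical helper: succeeds if the tar archive lists data/flows.json,
# with or without a leading "./" in the entry name.
archive_has_flows() {
    tar -tf "$1" | grep -Eq '(^|/)data/flows\.json$'
}
```

A typical use would be `archive_has_flows NodeREDWeatherAPI.tar || echo "flows.json is missing from the archive"`.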

As a first test I imported the archive as a Docker image on my Amazon EC2 machine (see the previous post for AWS details) with the following command:

cat /home/ubuntu/NodeREDWeatherAPI.tar | docker import - mynodered:latest

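Then I ran the container. Because docker import builds the image from a plain filesystem archive, the original image's start command is not preserved, so I passed it explicitly: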

docker run -it -p 1880:1880 mynodered node /usr/src/node-red/node_modules/node-red/red.js /data/flows.json "--userDir" "/data"
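An alternative, which is not the route I took above, is to snapshot the container as an image with docker commit and move it with docker save and docker load. That keeps the image metadata, including the start command, so a plain `docker run -it -p 1880:1880 mynodered` should be enough on the target machine. The sketch below is only illustrative: pack_container and unpack_image are hypothetical helper names, and like docker export, docker commit does not capture data stored in volumes.

```shell
#!/bin/sh
# Hypothetical helpers, not the export/import route used in this post.

# pack_container CONTAINER IMAGE ARCHIVE   (run on the source machine)
# Snapshots the container as an image, then writes that image to a tar file.
pack_container() {
    container="$1"
    image="$2"
    archive="$3"
    docker commit "$container" "$image" &&
        docker save --output "$archive" "$image"
}

# unpack_image ARCHIVE   (run on the target machine)
# Loads the image, with its name and metadata, from the tar file.
unpack_image() {
    docker load --input "$1"
}
```

For example, `pack_container mnnodered mynodered:latest mynodered-image.tar` on the laptop, then `unpack_image mynodered-image.tar` on EC2 after copying the file over; the archive file name here is just a placeholder.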

Node-RED runs on AWS EC2 after importing my Docker container, and of course I again used the Weather Company service on Bluemix:

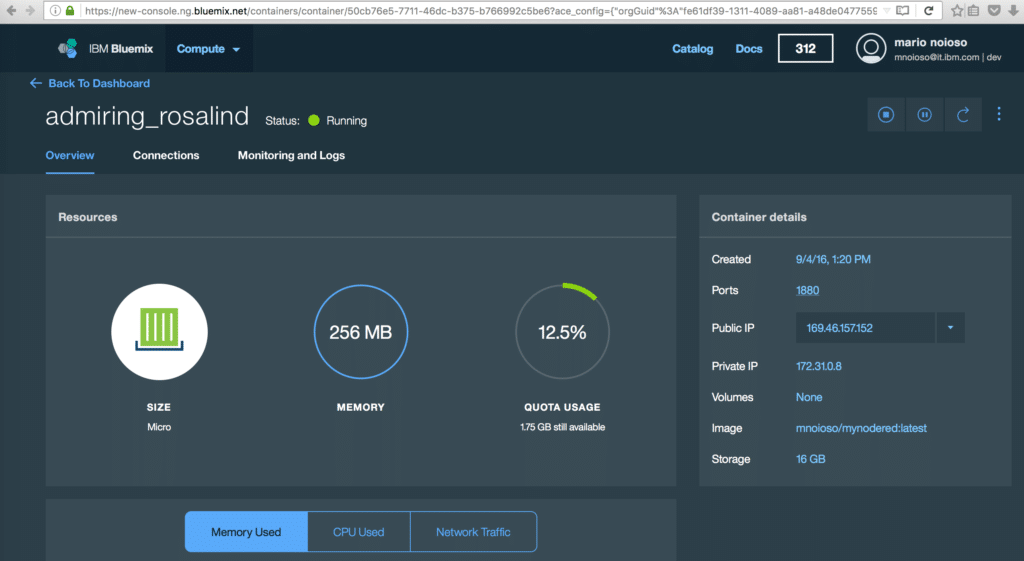

As a second test I used IBM Containers to run Docker containers in a hosted cloud environment on Bluemix. On Bluemix, containers are virtual software objects that include all of the elements that an app needs to run. A container has the benefits of resource isolation and allocation, but is more portable and efficient than, for example, a virtual machine.

From the Bluemix catalog I created a container with a public IP and opened the standard port 1880:

To upload my container to Bluemix I set up the IBM Containers plug-in (cf ic) to use the native Docker CLI.

You can read the official documentation to install the IBM Containers plug-in (cf ic), which lets you run native Docker CLI commands to manage your containers. I then pushed my local image to my private Bluemix registry from the command line:

| ~/Downloads @ redline (mnoioso) | => docker tag 2b56e7edab9e registry.ng.bluemix.net/mnoioso/mynodered

| ~/Downloads @ redline (mnoioso) | => docker push registry.ng.bluemix.net/mnoioso/mynodered

The push refers to a repository [registry.ng.bluemix.net/mnoioso/mynodered]

fe5d3148da40: Pushed

5f70bf18a086: Pushed

...

latest: digest: sha256:041f1deb123f8a4fec6a3b99e3c9ad88f0cd371a8cfd7b12f477233991c6ac63 size: 13457

and nothing else 🙂
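The tag and push steps can also refer to the image by name instead of by ID, which is easier to repeat on another machine. A sketch under that assumption: push_image is a hypothetical helper name, and it presumes the Docker CLI is already authenticated against the registry.

```shell
#!/bin/sh
# push_image LOCAL_IMAGE REMOTE_REPO
# Hypothetical helper: tags a local image with the registry path, then
# pushes it. The push only runs if the tag step succeeded.
push_image() {
    local_image="$1"
    remote_repo="$2"
    docker tag "$local_image" "$remote_repo" &&
        docker push "$remote_repo"
}
```

With the names from this post, that would be `push_image mynodered:latest registry.ng.bluemix.net/mnoioso/mynodered`.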

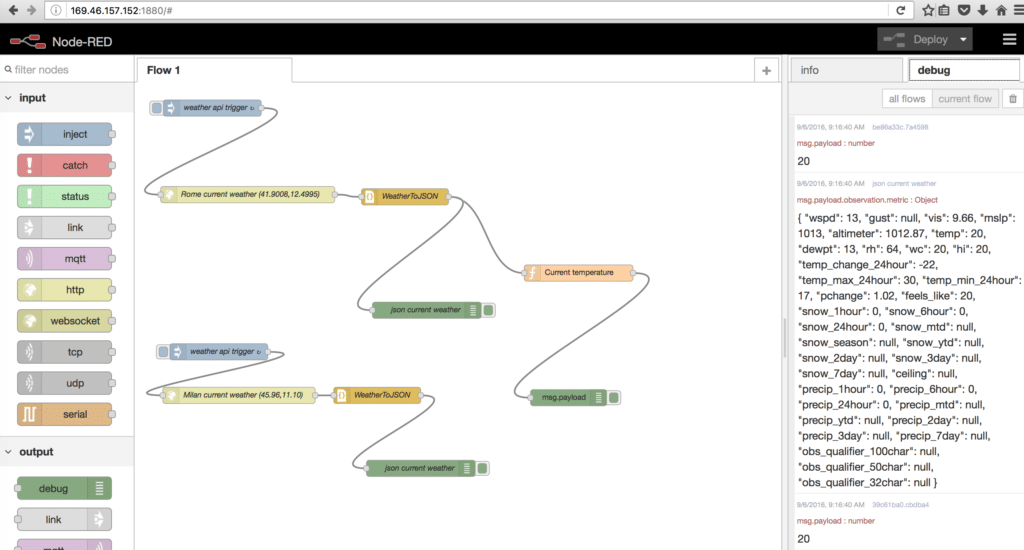

Here is my Node-RED flow; I simply clicked on the public address associated with the container:

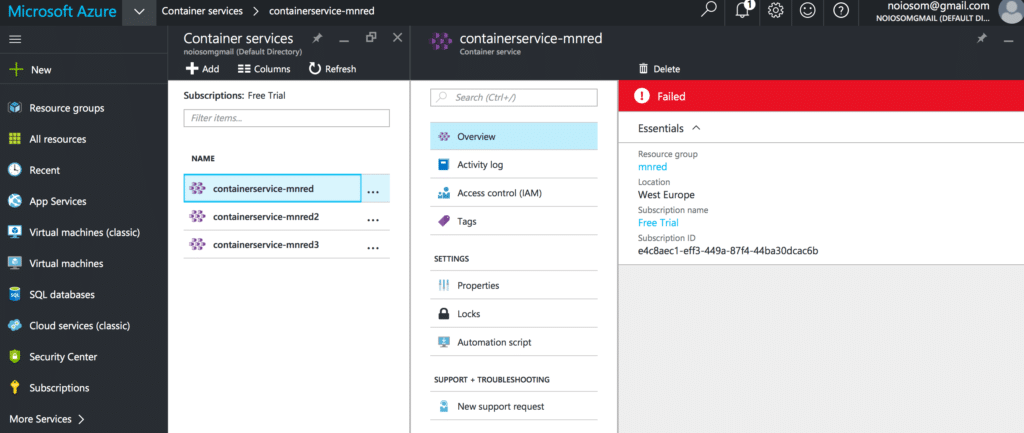

I wanted to try the Azure container service:

but I reached the service limits for my free account 🙂

In my opinion, the Docker experience is very positive. A Docker container is simple and fast to configure: users can take their own configuration, put it into code and deploy it in a wide variety of environments, so the requirements of the infrastructure are no longer tied to the environment of the application. Deployment is also very rapid. In the past, bringing up new hardware used to take days, and the appearance of VMs took this timeframe down to minutes. Docker manages to reduce deployment to mere seconds, because it creates a container for every process and does not boot an OS.

From the Node-RED point of view, a Docker container could be considered a way to migrate Node-RED flows from one of our competitors' platforms to the IBM platform.

Enjoy!
