Mood music station based on emotions and Spotify

Music can have a profound effect on the human psyche.

Music is undeniably important in shaping moods and, likewise, certain frames of mind call for certain kinds of songs. Several websites offer mood searches that filter songs by emotion and activity to help you find the perfect songs for your mood, and several apps, including Spotify, can propose playlists based on your mood.

In this post I combine my passion for music and for cognitive solutions to pursue a fun and innovative scenario:

choose a music track based on emotions detected on your face

My idea is to recognize emotions from a facial expression in an image, for example a picture taken with a smartphone camera, and to choose a music track based on the emotions detected on the face. For example, if you are angry or sad, my algorithm could suggest a rock track; if you are happy or surprised, a very cool jazz track could be great for you. Of course, the algorithm that maps emotions to music genres can be changed in any way you prefer; the core of the scenario is associating music with your emotions.
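A minimal Python sketch of such a configurable mapping, using only the example pairings from the paragraph above. The names `DEFAULT_GENRES` and `genre_for_emotion`, and the fallback genre, are assumptions made for illustration; this is not the code of the actual flow.

```python
# Example pairings from the text; replace them with any mapping you prefer.
DEFAULT_GENRES = {
    "anger": "rock",
    "sadness": "rock",
    "happiness": "jazz",
    "surprise": "jazz",
}


def genre_for_emotion(emotion, mapping=DEFAULT_GENRES, fallback="jazz"):
    """Return the genre configured for an emotion, or the fallback.

    The fallback value is an assumption; the text does not say what to
    suggest for emotions that have no explicit pairing.
    """
    return mapping.get(emotion.lower(), fallback)


print(genre_for_emotion("anger"))
print(genre_for_emotion("fear", fallback="ambient"))
```

Because the mapping is passed in as a parameter, changing the pairings does not require touching the rest of the logic.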

To implement this proof of concept I used the Microsoft Cognitive Services Emotion API. The API takes a facial expression in an image as input and returns the confidence across a set of emotions for each face in the image. The emotions detected are anger, contempt, disgust, fear, happiness, neutral, sadness, and surprise. These emotions are understood to be cross-culturally and universally communicated with particular facial expressions.
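To make the "confidence across a set of emotions" idea concrete, here is a small sketch that picks the strongest emotion for a face. It assumes the response is a list with one entry per face, each carrying a `scores` object keyed by emotion name; the sample numbers are invented for illustration and `dominant_emotion` is a hypothetical helper.

```python
def dominant_emotion(face):
    """Return the emotion name with the highest confidence for one face."""
    scores = face["scores"]
    return max(scores, key=scores.get)


# Invented sample shaped like the response described above (one face).
sample_response = [
    {
        "faceRectangle": {"left": 68, "top": 97, "width": 64, "height": 97},
        "scores": {
            "anger": 0.01,
            "contempt": 0.0,
            "disgust": 0.0,
            "fear": 0.0,
            "happiness": 0.9,
            "neutral": 0.05,
            "sadness": 0.01,
            "surprise": 0.03,
        },
    }
]

for face in sample_response:
    print(dominant_emotion(face))
```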

Based on the detected emotions, I used the Spotify Web API to search the Spotify music catalog. For example, for anger and sadness I search the catalog for a rock track tagged as hipster; for happiness a hip hop track could be fine; and for a neutral sentiment a Miles Davis track is a good choice 🙂
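As a rough sketch, the searches described above could be expressed as query strings for the Spotify search endpoint. The `QUERIES` table, the exact genre spellings, the fallback to the neutral query, and the `search_url` helper are my assumptions; sending the request (and any authorization header it may need) is left out.

```python
from urllib.parse import urlencode

SEARCH_ENDPOINT = "https://api.spotify.com/v1/search"

# Assumed query strings for the pairings described in the text.
QUERIES = {
    "anger": "genre:rock tag:hipster",
    "sadness": "genre:rock tag:hipster",
    "happiness": "genre:hip-hop",
    "neutral": "artist:Miles Davis",
}


def search_url(emotion, limit=1):
    """Build a track-search URL for an emotion.

    Emotions without an entry fall back to the neutral query (an assumption).
    """
    query = QUERIES.get(emotion, QUERIES["neutral"])
    params = {"q": query, "type": "track", "limit": limit}
    return SEARCH_ENDPOINT + "?" + urlencode(params)


print(search_url("anger"))
```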

The Spotify Web API endpoints return metadata in JSON format about artists, albums, and tracks directly from the Spotify catalogue. The API also provides access to user-related data such as playlists and music saved in a “Your Music” library, subject to the user’s authorization. Many endpoints are open and do not need any special permissions to access them.
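For illustration, here is how the JSON metadata of a track search might be reduced to what a player needs. The sketch assumes the search result nests tracks under `tracks.items` and that each track has `name`, `artists`, and `preview_url` fields; the sample payload is trimmed and its preview URL is a placeholder, not a real link.

```python
import json


def first_preview(search_json):
    """Return (track name, first artist, preview URL) for the first hit, or None."""
    items = json.loads(search_json).get("tracks", {}).get("items", [])
    if not items:
        return None
    track = items[0]
    # preview_url may be missing or null for some tracks, so use .get().
    return track["name"], track["artists"][0]["name"], track.get("preview_url")


# Trimmed, hand-written sample; the preview URL is a placeholder.
sample = """
{"tracks": {"items": [
  {"name": "So What",
   "artists": [{"name": "Miles Davis"}],
   "preview_url": "https://example.com/preview.mp3"}
]}}
"""

print(first_preview(sample))
```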

Here is a small list of APIs that I used for my mash-up:

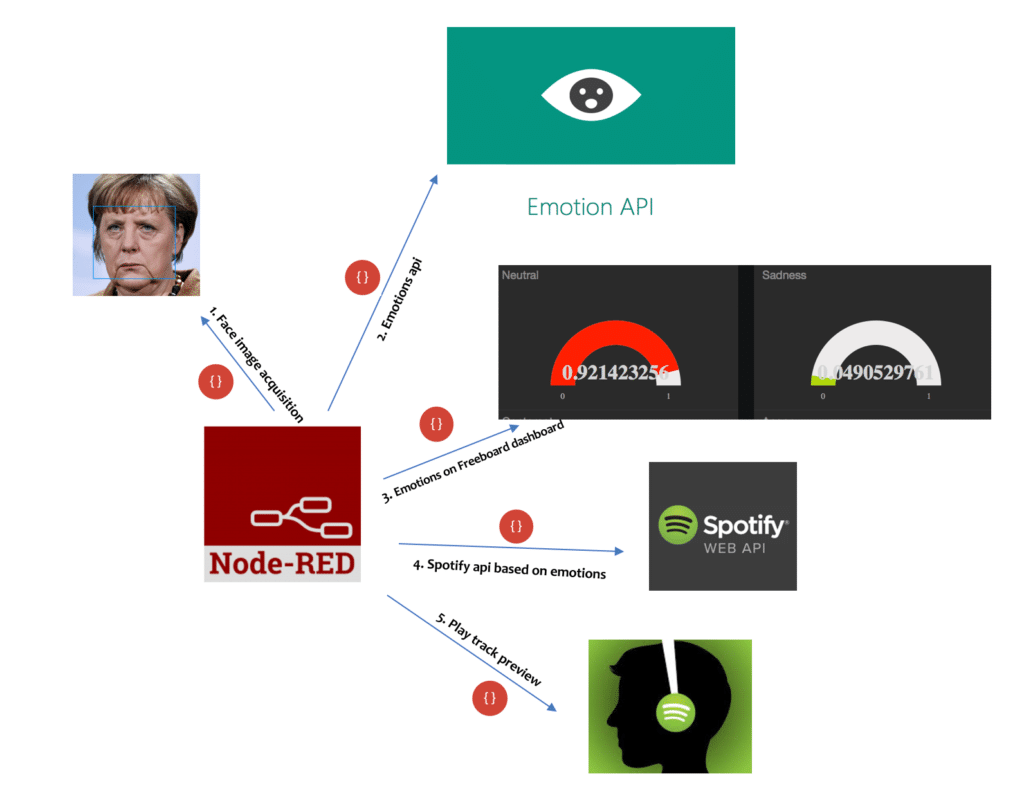

I used Node-RED to build a flow for my scenario; here is a high-level conceptual schema:

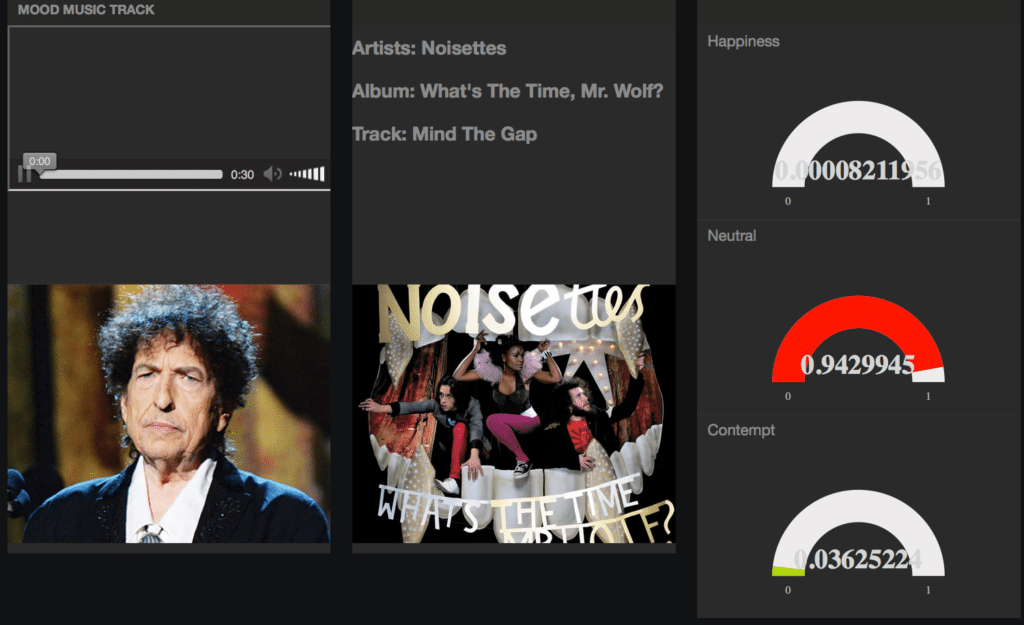

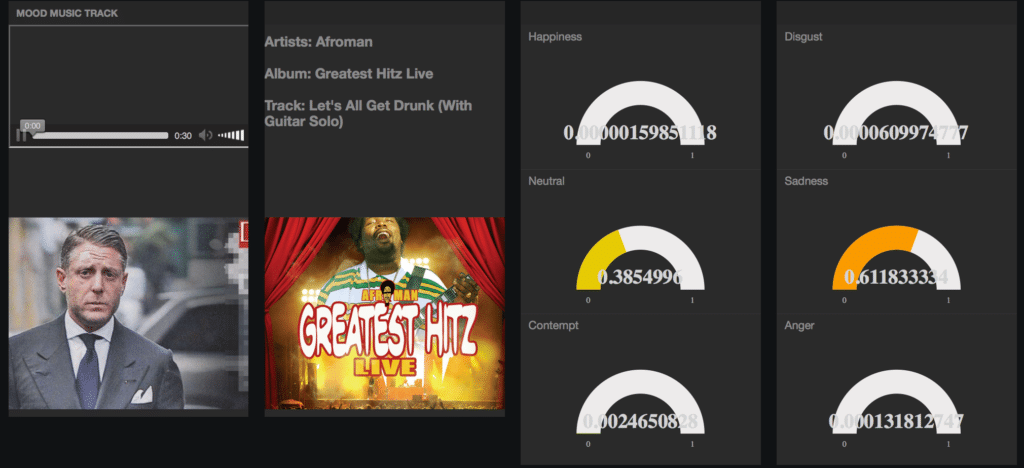

I used my preferred web dashboard, freeboard, to display data in real time: the acquired face image, the detected emotions, and a player for a 30-second preview (MP3 format) of the track.

The numeric value of each emotion detected on the face is displayed as a gauge on my dashboard.
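A tiny sketch of preparing those values for gauges, assuming the scores arrive as fractions between 0 and 1 and the gauges are configured for a 0 to 100 range. Both the range and the `gauge_values` helper are assumptions, not details of the actual dashboard.

```python
def gauge_values(scores):
    """Convert 0..1 confidence scores into percentages, one per gauge."""
    return {emotion: round(value * 100, 1) for emotion, value in scores.items()}


print(gauge_values({"happiness": 0.5, "neutral": 0.25}))
```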

Here is the neutral emotion detected on Bob Dylan's face; the music suggested is a track by the Noisettes:

Here is the sadness emotion detected on Lapo Elkann's face; the music suggested is a cheerful track by Afroman:

So enjoy my Mood music station based on emotions and Spotify 🙂


Can you please share the code?