Do you remember when Orange was the New Black? Now AI is everywhere. Every article has AI in the title, every product is powered by AI, every solution is based on an artificial intelligence capability, and every friend, colleague, partner, and customer talks about AI.

AI is not new. I had the pleasure of studying Artificial Intelligence and Expert Systems at university in the mid-90s, and the 1956 Dartmouth Artificial Intelligence conference gave birth to the field of AI. So why does it suddenly matter? Two forces are driving it: the exponential rise in computer processing power, as predicted by Moore’s Law, and the new era of big data.

In this Q&A-format article I would like to share my view on the matter.

1. What is Artificial Intelligence (AI)?

According to Wikipedia, artificial intelligence (AI) is intelligence exhibited by machines.

Colloquially, the term “artificial intelligence” is applied when a machine mimics “cognitive” functions that humans associate with other human minds, such as “learning” and “problem solving”. The field was founded on the claim that human intelligence “can be so precisely described that a machine can be made to simulate it”.

Capabilities currently classified as AI include:

understanding human speech

competing at a high level in strategic game systems

self-driving cars

intelligent routing in content delivery networks

interpreting complex data

So AI is a broad topic. It ranges from the Cortana personal assistant on your smartphone to self-driving cars to something in the future that might change the world dramatically.

It is very important to understand that there are two major theses about artificial intelligence:

“Weak AI thesis”: weak AI is AI that specializes in one area. There is AI that can beat the world chess champion at chess, but that is the only thing it does. Weak AI includes machine learning (developing and improving algorithms that help computers learn from data to create more advanced, intelligent computer systems), human language technologies (linking language to the world through speech recognition, language modeling, language understanding, spoken language systems, and dialog systems), perception and sensing (making computers and devices understand what they see to enable tasks ranging from autonomous driving to analysis of medical images), tools and platforms (chatbots that incorporate contextual data to augment and enrich human reasoning), integrative intelligence (computer vision and human language technologies to create end-to-end systems that learn from data and experience), cyberphysical systems and robotics (developing formal methods to ensure the integrity of drones, assistive robotics, and other intelligent technologies that interact with the physical world), and many other cases.

“Strong AI thesis”: strong AI refers to a computer that is as smart as a human, a machine that can perform any intellectual task that a human being can. It includes a mental capability that, among other things, involves the ability to reason, solve problems, think abstractly, comprehend complex ideas, learn quickly, and learn from experience. Strong AI is about intuition. The aim of strong AI is to get at what is happening when one’s mind silently and invisibly chooses, from a myriad of alternatives, the one that makes the most sense in a very complex situation. In many real-life situations, deductive reasoning is inappropriate, not because it would give wrong answers, but because there are too many correct but irrelevant statements that can be made; there are just too many things to take into account simultaneously for reasoning alone to be sufficient. Strong AI would be able to do all of those things as easily as you can.

Of course, what we are using today is weak AI.

2. How can strong AI be created?

No one really knows how to make it, but these are the most common strategies:

Duplicate the brain: the science world is working hard on reverse engineering the brain. Once we do that, we’ll know all the secrets of how the brain runs so powerfully and efficiently and we can draw inspiration from it and take its innovations. One example of computer architecture that mimics the brain is the artificial neural network. It starts out as a network of transistor “neurons” connected to each other with inputs and outputs. The way it learns is it tries to do a task, say handwriting recognition, and at first, its neural firings and subsequent guesses at deciphering each letter will be completely random. But when it’s told it got something right, the transistor connections in the firing pathways that happened to create that answer are strengthened; when it’s told it was wrong, those pathways’ connections are weakened. After a lot of this trial and feedback, the network has, by itself, formed smart neural pathways and the machine has become optimized for the task. The brain learns a bit like this but in a more sophisticated way, and as we continue to study the brain, we’re discovering ingenious new ways to take advantage of neural circuitry.

Brain emulation: the goal is to slice a real brain into thin layers, scan each one, use software to assemble an accurate reconstructed 3-D model, and then implement the model on a powerful computer.

Using the computer to solve the problem: the idea is to build a computer whose two major skills would be doing research on AI and coding changes into itself, allowing it not only to learn but also to improve its own architecture. Its main job would be to figure out how to make itself smarter. It might be the most promising method.
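The trial-and-feedback learning described under “Duplicate the brain” can be sketched with a perceptron, a far simpler stand-in for a real neural network. This is only an illustration under stated assumptions: the 3x3 toy “images”, the function names, and the learning rate are all made up for the example, and the classic perceptron rule used here only adjusts connections after a wrong guess rather than also reinforcing them after a right one.

```python
# Minimal perceptron sketch (illustrative; all names and data are hypothetical).
# Each "image" is a 3x3 grid flattened to 9 pixels; label 1 = digit "1", 0 = digit "0".

def predict(weights, bias, pixels):
    """Fire (return 1) if the weighted sum of the inputs is positive."""
    total = bias + sum(w * p for w, p in zip(weights, pixels))
    return 1 if total > 0 else 0

def train(samples, epochs=10, rate=1.0):
    """Adjust connection weights from feedback on each guess."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for pixels, label in samples:
            error = label - predict(weights, bias, pixels)  # 0 when the guess was right
            for i, p in enumerate(pixels):
                # Guessed too low: strengthen active inputs; too high: weaken them.
                weights[i] += rate * error * p
            bias += rate * error
    return weights, bias

SAMPLES = [
    ([0, 1, 0, 0, 1, 0, 0, 1, 0], 1),  # a plain "1"
    ([1, 1, 1, 1, 0, 1, 1, 1, 1], 0),  # a plain "0"
    ([0, 1, 0, 0, 1, 0, 0, 1, 1], 1),  # a "1" with a small foot
    ([1, 1, 1, 1, 0, 1, 1, 1, 0], 0),  # a "0" with a missing corner
]

weights, bias = train(SAMPLES)
# A "1" with a serif that was never shown during training:
print(predict(weights, bias, [1, 1, 0, 0, 1, 0, 0, 1, 0]))
```

After a few passes over the samples the weights stop changing, because every training image is classified correctly. Real handwriting recognition needs many more pixels, layers, and examples, but the feedback loop has the same shape.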

3. Is AI a new technology?

No, not really. I studied Artificial Intelligence and Expert Systems at university in the mid-90s, and AI was already well under way. Much of its theoretical and technological underpinning was developed over the past 70 years. In 1950 Alan Turing published a paper, considered the first serious proposal in the philosophy of AI, in which he devised his famous Turing Test, designed to be a rudimentary way of determining whether or not a computer counts as intelligent. The term AI was coined by American computer scientist John McCarthy at the 1956 Dartmouth Conference, widely considered the birthplace of the discipline. Known as the father of AI, McCarthy created the Lisp computer language in 1958, which became the standard AI programming language and continues to be used today.

You can find a brief history here.

4. What are the various areas where AI can be used?

We are living in a golden age of AI advances. Every day, it seems, computer scientists are making more progress in areas such as computer vision, deep learning, speech, and natural language processing, areas of AI research that have challenged the field’s foremost experts for decades. The world’s largest technology companies hold the keys to some of the largest databases on our planet. Much like goods and coins before it, data is becoming an important currency for the modern world. The data’s value is rooted in its applications to artificial intelligence. Whichever company owns the data effectively owns AI.

Companies like Facebook, Amazon, Google, IBM, Microsoft, DeepMind and Apple have a ton of data. These companies announced the launch of the new Partnership on AI. You can find more information here.

5. Why did Microsoft decide to invest in AI?

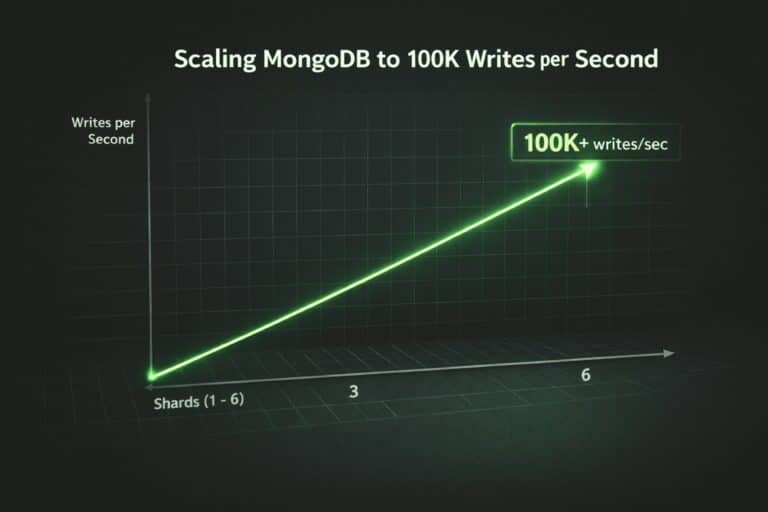

Microsoft has the talent, the vision, the data, the technologies, and the resources not only to realize AI but also to push the field forward. Microsoft has been investing in the promise of AI for more than 25 years and has a bold vision: to build systems that have true AI across agents, applications, services, and infrastructure. This vision is also inclusive: democratize AI so that everyone can realize its benefits.

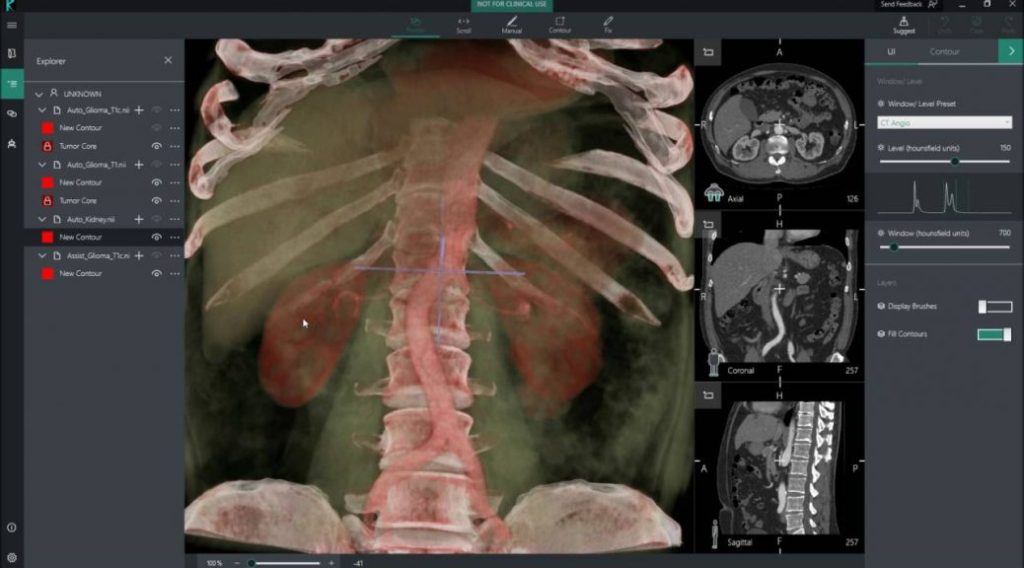

The results of these efforts include Cortana, Bot Framework, and Cognitive Services. There are examples in the healthcare industry, where applying cognitive services can help with clinical data analysis. A great example of modern intelligence capabilities in healthcare is the InnerEye project, which uses machine learning for medical imaging analysis to do things like quantify the size of tumors and identify which areas are most aggressive, tasks that are time-consuming and difficult for health professionals.

In the Public Safety sector, Situational Awareness solutions will completely change our concept of public security. Visual processing will locate and track individuals, vehicles, and items of interest across a number of real-time video streams. It is also possible to create solutions in which technology understands the real world, by making it intelligent and teaching it how to interact in more human and personal ways, thus facilitating the daily interactions of blind or partially sighted people.

https://www.youtube.com/watch?v=R2mC-NUAmMk

6. What are machine learning and deep learning?

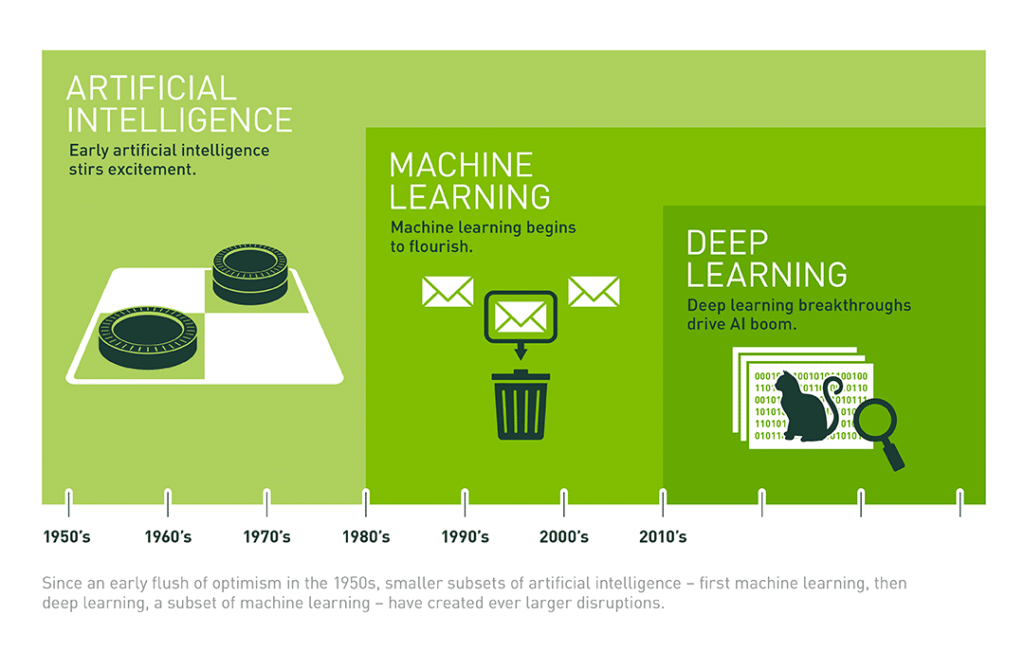

You can think of deep learning, machine learning and artificial intelligence as a set of Russian dolls nested within each other. Deep learning is a subset of machine learning, which is a subset of AI.

Machine learning is a data science technique that allows computers to use existing data to forecast future behaviors, outcomes, and trends. Using machine learning, computers learn without being explicitly programmed. Forecasts or predictions from machine learning can make apps and devices smarter. When you shop online, machine learning helps recommend other products you might like based on what you’ve purchased. When your credit card is swiped, machine learning compares the transaction to a database of transactions and helps detect fraud. When your robot vacuum cleaner vacuums a room, machine learning helps it decide whether the job is done.
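The credit card example can be sketched in a few lines. This is a toy, not how a real fraud system works: it assumes that the only feature is the transaction amount, that past amounts are roughly bell-shaped, and that anything more than three standard deviations from the learned average is worth flagging. The function names, the threshold, and the sample history are all hypothetical.

```python
from statistics import mean, stdev

def fit(amounts):
    """'Learn' what a normal transaction looks like from past data."""
    return mean(amounts), stdev(amounts)

def looks_suspicious(model, amount, threshold=3.0):
    """Flag an amount that sits far outside the learned normal range."""
    mu, sigma = model
    return abs(amount - mu) > threshold * sigma

# Hypothetical purchase history for one card holder.
history = [12.5, 30.0, 18.2, 25.0, 22.4, 15.9, 27.3, 19.8]
model = fit(history)

print(looks_suspicious(model, 21.0))   # an ordinary purchase
print(looks_suspicious(model, 950.0))  # far outside anything seen before
```

Nobody wrote a rule saying “950 is too much”: the cut-off comes from the data, and it shifts as the history changes. That is the sense in which the computer learns without being explicitly programmed.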

Deep learning is a data science technique based on learning data representations, as opposed to task-specific algorithms. Some representations are loosely based on interpretation of information processing and communication patterns in a biological nervous system, such as neural coding, which attempts to define a relationship between various stimuli and associated neuronal responses in the brain. Deep learning architectures such as neural networks have been applied to fields including computer vision, speech recognition, natural language processing, and social network filtering.
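To give a feel for what an intermediate “representation” buys you, here is a tiny two-layer network for the XOR function, which a single threshold unit cannot compute. It is only a sketch: the weights are chosen by hand for the illustration, whereas a deep learning system would learn them from data, and the function names are made up.

```python
def step(x):
    """Threshold activation: fire only when the input is positive."""
    return 1 if x > 0 else 0

def hidden(a, b):
    """Hidden layer: re-describe the raw inputs as two simpler features."""
    h_or = step(a + b - 0.5)   # fires if at least one input is on
    h_and = step(a + b - 1.5)  # fires only if both inputs are on
    return h_or, h_and

def xor(a, b):
    """Output layer: a simple rule on top of the hidden representation."""
    h_or, h_and = hidden(a, b)
    return step(h_or - h_and - 0.5)  # "at least one, but not both"

for a in (0, 1):
    for b in (0, 1):
        print(a, b, hidden(a, b), xor(a, b))
```

The output unit never looks at the raw inputs. It only sees the hidden layer’s description of them, and in that description the problem becomes easy. Stacking many such layers, with learned weights, is the basic idea behind deep architectures.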

7. What about the risks of AI?

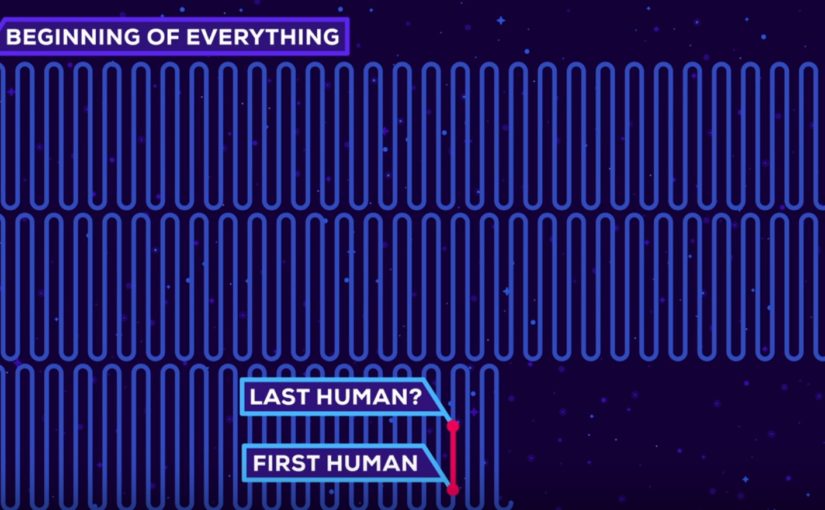

Stephen Hawking has warned that the creation of powerful artificial intelligence will be “either the best, or the worst thing, ever to happen to humanity”. Some people worry that developments in AI will bring about the singularity, a point in history when AI will surpass human intelligence, leading to unimaginable new scenarios.

I think that the singularity is still far off, considering that we still have no clear idea of how to implement strong AI. I think that the concrete risks concern the ethical and legal issues around autonomous vehicles such as automobiles, drones, etc. For example, when an accident appears unavoidable, is the technology’s first obligation to protect its occupants as best it can, or to minimize total human harm? Can the vehicle’s occupants override the algorithm’s decision? There are many ways in which an AI’s behavior can affect human lives.

Other risks concern machine learning algorithms that can identify the best workers, the best football players, or the best students. I think our lives could become a lot more complicated, because many aspects of life could be influenced by these predictions. Moreover, an algorithm could predict whether your marriage will last, and, based on big data and machine learning, everyone could know your life expectancy. Everyone could then use tools that apply game mechanics to non-game contexts (gamification), except that in this case the non-game context would be everyday life. Of course, the goal of the game would be to maximize life expectancy by eating healthily, doing sports, and so many other things, in order to convince the prediction algorithm to give us a higher ranking.

This is a dystopian view of the future, and it could be a Black Mirror episode 🙂

Will we lose our jobs because of AI? We are starting to see the effects of weak AI automating cognitive work, things we used to think only people could do, such as visual inspection, customer support, medical diagnosis, and so on. History shows that every big revolution comes at a cost. The advent of new technology can be destabilizing for many people, but in the long run it also improves society as a whole and raises the nation’s standard of living.

I believe that new technology does more good than harm.