A Big Data architecture in data processing

I started my career as an IBM software engineer in 2000. Over the roughly 20 years since, it has been amazing to see how IT has evolved to handle the ever-growing amount of data, through technologies including relational databases, data warehouses, ETL (Extraction, Transformation and Loading), online analytical processing (OLAP) reporting, big data, and now cloud, IoT and AI.

The objective of this post is to analyze, first, the underlying principles for handling large amounts of data and, second, a thought process that I hope can help you gain a deeper understanding of any emerging technology in the data space and come up with the right architecture as you ride current and future technology waves.

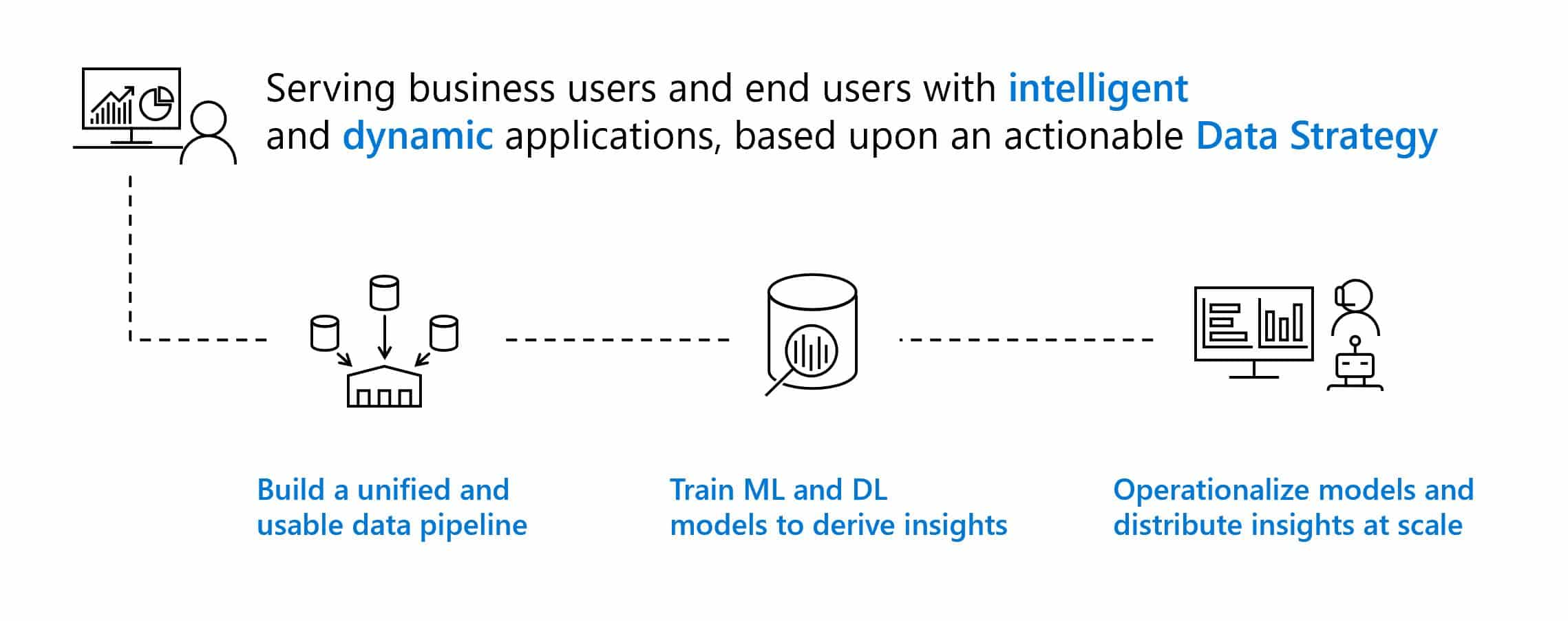

All organizations make decisions about how they engage with, operate on and leverage their data. Companies that take a holistic view and adopt an enterprise-grade data strategy are well positioned to optimize their technology investments and lower their costs. Such a strategy treats data as an asset from which valuable insights can be derived; integrated into business operations, these insights can be used to gain a competitive advantage.

How companies are transforming through data:

Companies that embrace the constructs of a data strategy often define dedicated roles to own these strategies and policies. This ranges from augmenting executive staff and IT staff with roles such as chief data officer and chief data strategist, respectively, to expanding the responsibilities of traditional enterprise data architects.

A data strategy is a common reference of methods, services, architectures, usage patterns and procedures for acquiring, integrating, storing, securing, managing, monitoring, analyzing, consuming and operationalizing data. Any data strategy rests on a good big data architecture, and a good architecture takes into account several key aspects:

- Design principles: foundational technical goals and guidance for all data solutions.

- Design patterns: high-level solution templates for common, repeatable architecture modules (batch vs. stream, data lakes vs. relational DBs, etc.).

- Data usage patterns: solutions that share common functional and technical requirements, such as data discovery, data science or operational decision support.

- Service and tool rationalization: which services and tools should be used to optimize the solution.

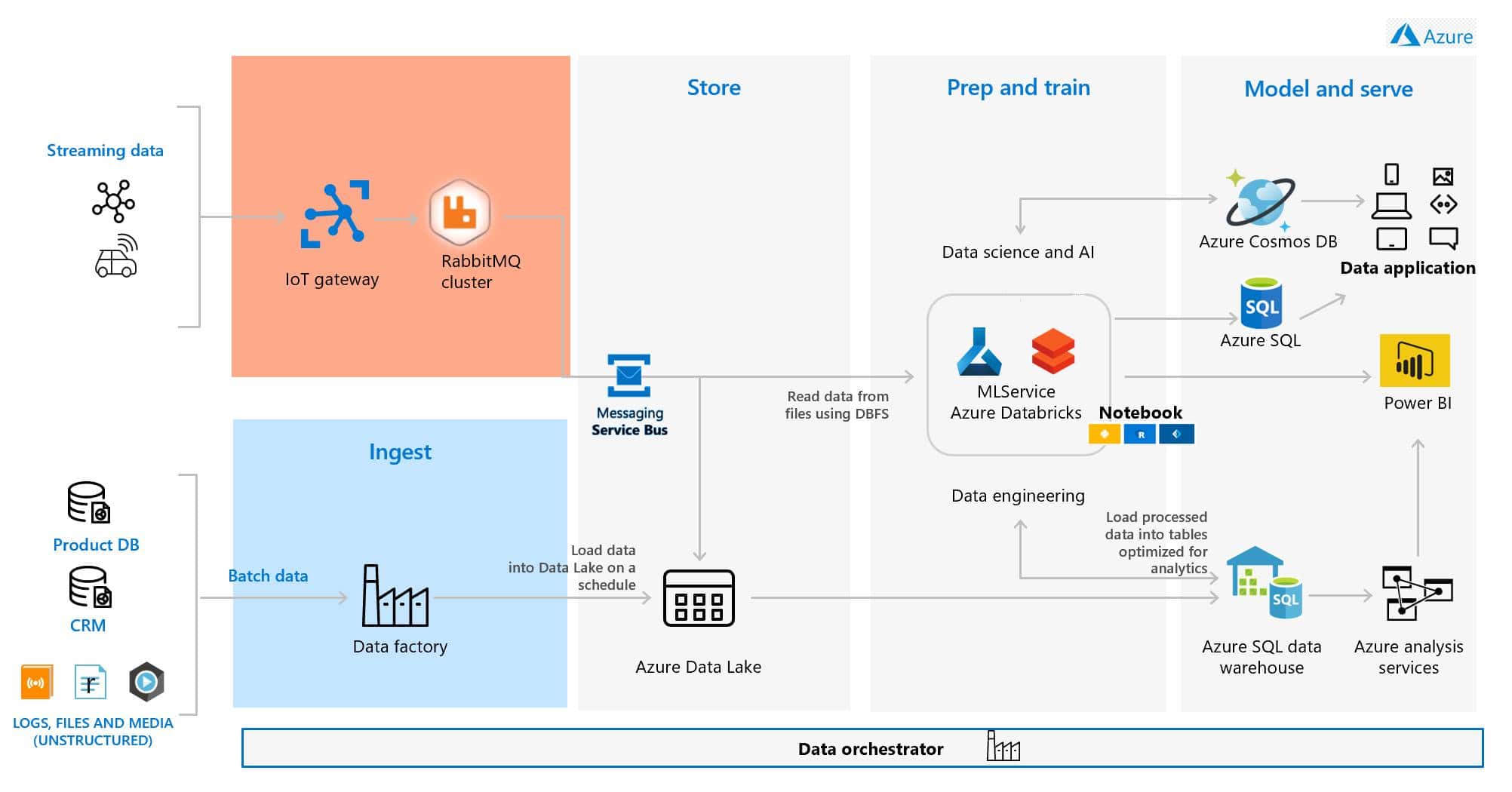

Obviously, an appropriate big data architecture design plays a fundamental role in meeting big data processing needs. Several reference architectures have been proposed to support the design of big data systems; the one represented here is just one possible architecture, based on Microsoft technology.

The main goal is to process big data sources and to allow:

- Interactive exploration of big data

- Predictive analytics and machine learning

- Development of smart applications based on data

Main blocks

Data orchestration: many data processing operations are repeated and encapsulated in workflows that transform source data, move data between multiple sources and sinks, load the processed data into an analytical data store, or push the results straight to a report or dashboard. To automate these workflows, you can use an orchestration technology such as Azure Data Factory.
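
To make the idea of a workflow concrete, here is a minimal sketch in plain Python of steps that run in dependency order. It is not how Azure Data Factory is configured; the step names (`extract`, `transform`, `load`), the sample values and the `run_workflow` helper are all hypothetical and only illustrate the concept.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def run_workflow(steps, dependencies):
    """Run each step after the steps it depends on.

    steps: maps a step name to a function that receives the results so far.
    dependencies: maps a step name to the set of steps that must run before it.
    Returns the execution order and the result of every step.
    """
    order = list(TopologicalSorter(dependencies).static_order())
    results = {}
    for name in order:
        results[name] = steps[name](results)
    return order, results


# Hypothetical three-step workflow: extract -> transform -> load.
steps = {
    "extract": lambda res: [3, -1, 4, -1, 5],                    # read source data
    "transform": lambda res: [x for x in res["extract"] if x >= 0],  # drop bad values
    "load": lambda res: sum(res["transform"]),                   # stand-in for a sink
}
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}

order, results = run_workflow(steps, dependencies)
print(order)
print(results["load"])
```

A real orchestrator adds scheduling, retries and monitoring on top of this ordering logic, which is the main reason to use a managed service rather than hand-written scripts.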

Data storage: data for batch processing operations is stored in a distributed file store that can hold high volumes of large files in various formats, also known as a data lake.

Data preparation: data files are processed using long-running batch jobs that filter, aggregate, and otherwise prepare the data for analysis. These jobs can be written as Java, Scala, or Python programs in Databricks (which runs on Spark clusters).
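
As a rough illustration of the "filter, then aggregate" shape of such a job, the sketch below uses only the Python standard library on a tiny in-memory CSV. In Databricks the same logic would normally be written against Spark so that it scales across a cluster; the column names, the sample rows and the `prepare` function are assumptions made up for this example.

```python
import csv
import io
from collections import defaultdict

# Hypothetical raw file content; in practice this would be read from the data lake.
RAW_CSV = """region,amount
north,120.0
south,
north,80.0
south,50.5
"""


def prepare(raw_text):
    """Filter out rows with a missing amount, then total the amount per region."""
    totals = defaultdict(float)
    for row in csv.DictReader(io.StringIO(raw_text)):
        if not row["amount"]:  # filter: skip incomplete records
            continue
        totals[row["region"]] += float(row["amount"])  # aggregate
    return dict(totals)


totals = prepare(RAW_CSV)
print(totals)
```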

Analytical data store: the processed data is prepared for analysis and then served in a structured format that can be queried using analytical tools. The analytical data store used to serve these queries can be a Kimball-style relational data warehouse (see the Inmon vs. Kimball approaches to data warehousing), as seen in most traditional business intelligence (BI) solutions. Azure SQL Data Warehouse provides a managed service for large-scale, cloud-based data warehousing.
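
A Kimball-style warehouse organizes data as fact tables surrounded by dimension tables (a star schema). The sketch below shows that shape with Python's built-in `sqlite3` module rather than Azure SQL Data Warehouse; the table names `fact_sales` and `dim_product` and the sample rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # throwaway in-memory database

# One dimension table (descriptive attributes) and one fact table (measures).
conn.executescript("""
CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, category TEXT);
CREATE TABLE fact_sales (
    product_id INTEGER REFERENCES dim_product(product_id),
    amount REAL
);
INSERT INTO dim_product VALUES (1, 'books'), (2, 'games');
INSERT INTO fact_sales VALUES (1, 10.0), (1, 15.0), (2, 40.0);
""")

# Typical analytical query: join facts to a dimension and aggregate a measure.
rows = conn.execute("""
    SELECT d.category, SUM(f.amount) AS total
    FROM fact_sales AS f
    JOIN dim_product AS d ON d.product_id = f.product_id
    GROUP BY d.category
    ORDER BY d.category
""").fetchall()

print(rows)
conn.close()
```

Keeping measures in the fact table and descriptive attributes in dimensions is what lets BI tools slice the same numbers by category, date, region and so on.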

Machine learning: In many cases, using a prebuilt model is just a matter of calling a web service or using an ML library to load an existing model. Some options include the web services provided by Azure Cognitive Services or pretrained neural network models provided by the Cognitive Toolkit. If a prebuilt model does not fit your data or your scenario, options in Azure include Azure Machine Learning.

Analysis and reporting: to empower users to analyze the data, the architecture includes a data modeling layer, such as a multidimensional OLAP cube in Azure Analysis Services. It might also support self-service BI through tools such as Power BI.

Combining unstructured big data with structured data provides the most complete view for new smart business applications, enabling analytics that can yield valuable insights for improving business processes. The architecture above has been designed around five pillars:

- operational excellence

- security

- reliability

- performance

- cost optimization

Each pillar deserves a post of its own 🙂

Stay tuned…