AI Tools, Agents, and the Future of Software Development

Why GenAI Is Not About Writing Code Faster, but About Understanding Systems Better

In most of the public conversation, Generative AI in software development has been framed as a productivity boost: faster coding, better autocomplete, automated tests. These are useful improvements, but ultimately incremental.

What is far more interesting—and far more consequential—is happening elsewhere.

Across large enterprises, consultancies, and engineering organizations, GenAI is being applied to a much harder problem: understanding and evolving existing software systems. Systems that are large, long-lived, business-critical, and poorly documented. Systems where risk does not come from writing new code, but from misunderstanding old behavior.

A clear pattern is emerging across the industry:

GenAI is becoming a cognitive layer for software systems, not a replacement for developers.

This article synthesizes perspectives from five major players (Thoughtworks, Boston Consulting Group, Ernst & Young, IBM Consulting, and Xebia) to outline how AI tools and agents are reshaping the future of software development.

From Code Generation to System Comprehension

The dominant narrative around GenAI has focused on forward engineering: generating code, tests, or documentation from prompts. But in real-world enterprise environments, the bottleneck is rarely code creation.

It is code comprehension.

Thoughtworks makes this explicit in its work on GenAI for modernization, where the core observation is that developers spend far more time reading and understanding code than writing it. Legacy systems encode business rules, constraints, and behaviors that often exist nowhere else — not in documentation, not in specifications, sometimes not even in people’s heads.

Their approach treats code as data.

Instead of feeding raw source files to an LLM, code is parsed into Abstract Syntax Trees, transformed into a knowledge graph, and enriched with explanations generated by a Large Language Model at multiple levels of abstraction: method, class, module, capability.

This architecture enables questions like:

- What business capability does this part of the system implement?

- Which rules are enforced here, and under what conditions?

- How does control actually flow across components?

This is not speculative. It is implemented in Thoughtworks’ internal accelerator, CodeConcise.

Reference:

https://martinfowler.com/articles/legacy-modernization-gen-ai.html

The crucial point: the LLM is not operating on text alone, but on structured representations of the system. This dramatically improves accuracy, reduces hallucination, and makes explanations traceable.
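As a rough illustration of the "code as data" idea, the sketch below parses source into an AST and derives a tiny call graph that can be queried. It is a minimal sketch, not CodeConcise: the sample functions (`load_order`, `apply_discount`, `checkout`) and the helpers `build_call_graph` and `callers_of` are hypothetical, Python's standard `ast` module stands in for a real multi-language parser, and a plain dictionary stands in for a graph database.

```python
import ast
from collections import defaultdict

# Hypothetical "legacy" source, embedded as a string for illustration only.
SOURCE = '''
def load_order(order_id):
    return {"id": order_id, "total": 120}

def apply_discount(order):
    if order["total"] > 100:
        order["total"] *= 0.9
    return order

def checkout(order_id):
    order = load_order(order_id)
    return apply_discount(order)
'''


def build_call_graph(source: str) -> dict[str, set[str]]:
    """Map each top-level function to the plain function names it calls."""
    tree = ast.parse(source)
    graph: dict[str, set[str]] = defaultdict(set)
    for node in tree.body:
        if not isinstance(node, ast.FunctionDef):
            continue
        graph[node.name]  # register the function even if it calls nothing
        for child in ast.walk(node):
            # Only direct calls by name (foo(...)); method calls are ignored here.
            if isinstance(child, ast.Call) and isinstance(child.func, ast.Name):
                graph[node.name].add(child.func.id)
    return dict(graph)


def callers_of(graph: dict[str, set[str]], target: str) -> list[str]:
    """Answer a structural question: which functions call `target`?"""
    return sorted(fn for fn, callees in graph.items() if target in callees)


graph = build_call_graph(SOURCE)
print(callers_of(graph, "apply_discount"))
```

In a fuller pipeline, each graph node would also carry an LLM-generated explanation, so that a question about a business capability can be answered from the graph neighborhood rather than from raw text.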

AI Agents and Multi-Step Reasoning at Enterprise Scale

If Thoughtworks focuses on representation, BCG formalizes execution through GenAI agents.

In BCG’s framework, an AI agent is an LLM equipped with:

- a specific role,

- access to structured information,

- reasoning capabilities,

- and the ability to iterate.

More powerful still is a multi-agent system, where specialized agents collaborate in sequence: analysis, validation, summarization, refinement.

BCG demonstrates this approach in large-scale modernization programs, including public-sector and banking systems with millions of lines of COBOL or PL/SQL code. In one case, GenAI agents processed over 3 million lines of code in days, extracting thousands of business rules and producing complexity scores, work that would traditionally take years.

Key agents include:

- Code analysis agents

- Dependency graph agents

- Business-rule extraction agents

- Summarization and editorial agents

This architecture enables hyper-transparent discovery, making unknowns visible early enough to influence design decisions.

Reference:

https://www.bcg.com/x/the-multiplier/how-gen-ai-rewriting-legacy-tech-modernization-rules
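The sequential multi-agent pattern described in this section can be sketched in a few lines. This is an illustrative sketch only, not BCG's implementation: each "agent" is a plain function standing in for an LLM call, the COBOL-like fragment is invented, and the names `Finding`, `analysis_agent`, `validation_agent`, `summarization_agent`, and `run_pipeline` are hypothetical. The refinement step is omitted for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical COBOL-like fragment, used only for illustration.
SOURCE = """\
MOVE ZERO TO WS-DISCOUNT.
IF ORDER-TOTAL > 100 MOVE 10 TO WS-DISCOUNT.
IF CUSTOMER-TYPE = 'GOLD' ADD 5 TO WS-DISCOUNT.
IF WS-FLAG PERFORM CLEANUP.
"""


@dataclass
class Finding:
    """Shared state handed from one agent to the next."""
    source: str
    candidates: list[str] = field(default_factory=list)
    validated: list[str] = field(default_factory=list)
    summary: str = ""


Agent = Callable[[Finding], Finding]


def analysis_agent(finding: Finding) -> Finding:
    # Stand-in for an LLM: treat every IF statement as a candidate business rule.
    finding.candidates = [
        line.strip()
        for line in finding.source.splitlines()
        if line.strip().upper().startswith("IF ")
    ]
    return finding


def validation_agent(finding: Finding) -> Finding:
    # Deterministic check: keep only candidates that contain an explicit comparison.
    finding.validated = [
        rule for rule in finding.candidates
        if any(op in rule for op in (">", "<", "="))
    ]
    return finding


def summarization_agent(finding: Finding) -> Finding:
    finding.summary = (
        f"{len(finding.validated)} of {len(finding.candidates)} "
        "candidate rules validated"
    )
    return finding


def run_pipeline(source: str, agents: list[Agent]) -> Finding:
    """Run the agents in sequence, each one enriching the shared finding."""
    finding = Finding(source=source)
    for agent in agents:
        finding = agent(finding)
    return finding


result = run_pipeline(SOURCE, [analysis_agent, validation_agent, summarization_agent])
print(result.summary)
```

The design point is that each role has a narrow contract and a checkable output, so a weak extraction can be caught by the next agent instead of flowing straight into a report.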

GenAI Across the SDLC, Not Just in the IDE

EY extends the conversation across the full Software Development Lifecycle.

Their work emphasizes GenAI as a force multiplier, not an autonomous actor. Practical applications include:

- Automated documentation for undocumented COBOL systems

- Assisted translation from legacy languages to modern stacks (PL/SQL to Spark SQL, SAS to PySpark)

- Test generation and code review

- Infrastructure-as-Code and pipeline generation

- Security and compliance checks

One important insight from EY is organizational:

GenAI compresses the experience curve. Junior engineers gain context faster. Senior engineers reason at higher abstraction levels. Dependency on subject-matter experts (SMEs) is reduced without eliminating human validation.

EY’s pilots report efficiency gains of 30–60% in real modernization initiatives, with controlled accuracy and human oversight.

Reference:

Capability-Driven Modernization, Not Just Cloud Migration

IBM Consulting takes a more architectural stance.

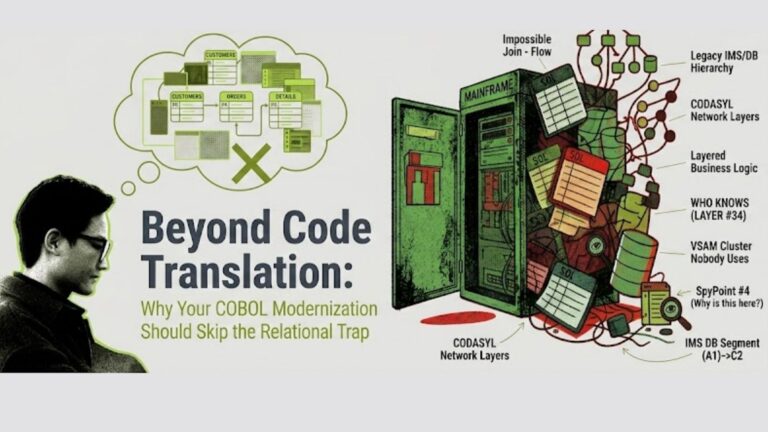

A recurring failure pattern in modernization is assuming that cloud migration equals transformation. In reality, moving applications without addressing capability duplication, misalignment, and organizational structure simply relocates complexity.

IBM positions GenAI as an accelerator for capability-driven modernization, enabling:

- Mapping business capabilities to applications, APIs, and data

- Detecting duplicated functionality across domains

- Supporting domain-driven decomposition

- Generating target architectures, APIs, and security patterns aligned with enterprise standards

IBM’s examples include GenAI-assisted API discovery mapped to industry models such as BIAN, helping large banks rationalize portfolios with tens of thousands of APIs.

Reference:

https://www.ibm.com/think/topics/generative-ai-for-application-modernization

End-to-End Acceleration: From Assessment to Execution

Xebia completes the picture with an end-to-end, GenAI-enhanced modernization framework.

Their approach applies GenAI to:

- Initial system investigation and dependency detection

- Automated documentation generation

- Technical debt identification

- Modernization strategy recommendations

- Code foundation generation

- Assisted refactoring and migration

Rather than focusing on one phase, Xebia demonstrates how AI can remove friction across the entire journey, delivering 20–30% execution gains in real projects.

Crucially, their work reinforces a shared conclusion: GenAI adds the most value early, during discovery, planning, and design—where uncertainty is highest and mistakes are most expensive.

Reference:

https://xebia.com/articles/legacy-modernization-in-the-age-of-genai-enhancing-efficiency-and-speed

Real Tools and Platforms Enabling This Shift

This transformation is not theoretical. It is supported by a growing ecosystem of production-grade tools and platforms, each playing a precise architectural role:

- Graph databases: Neo4j, Amazon Neptune. Used to represent code structure, dependencies, control flow, and capability relationships as traversable knowledge graphs.

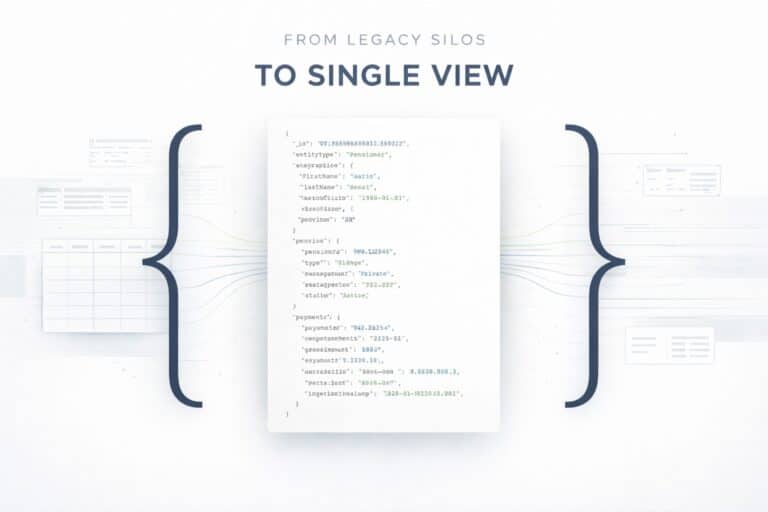

- Operational + semantic data platforms: MongoDB (Atlas). Used as the system of record for modernization intelligence:

  - unified storage of source code metadata, AST outputs, documentation, business rules

  - flexible modeling of evolving schemas during discovery and refactoring

  - vector search for semantic retrieval over code, documents, APIs, and capabilities

  - event-driven patterns and change streams to support continuous modernization

  MongoDB sits where structure, evolution, and scale must coexist, which is exactly the problem space of legacy modernization.

- LLM platforms: OpenAI GPT-4/4.1, Azure OpenAI, Anthropic Claude. Used for reasoning, summarization, explanation, translation, and hypothesis generation, always grounded in retrieved context.

- RAG frameworks: LangChain, LlamaIndex, Haystack. Orchestrate retrieval across document stores, vector indexes, graphs, and operational databases.

- Agent frameworks: AutoGen, CrewAI, Semantic Kernel. Enable multi-step, role-based reasoning workflows (analysis, validation, summarization, refinement).

- Static analysis tools: SonarQube, IntelliJ inspections. Provide deterministic signals on code quality, complexity, and dead code, feeding structured inputs into AI pipelines.

- Observability platforms: New Relic, Datadog. Supply runtime evidence to complement static analysis, crucial for understanding what is actually used.

- Modernization accelerators: OpenRewrite, IBM Cloud Accelerators. Apply large-scale, rule-driven refactoring and transformation once understanding has been established.
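To make "grounded in retrieved context" concrete, here is a toy sketch of the retrieve-then-prompt step that the RAG and vector-search entries above refer to. It makes several assumptions: the component names and summaries are invented, a bag-of-words cosine similarity stands in for real embeddings and a vector index, and the functions `vectorize`, `cosine`, `retrieve`, and `build_prompt` are hypothetical helpers rather than any framework's API. No LLM is called; the sketch stops at the prompt.

```python
import math
import re
from collections import Counter

# Hypothetical component summaries, e.g. produced earlier by static analysis plus an LLM.
SUMMARIES = {
    "BillingService.applyDiscount": "applies a discount to orders above a total threshold",
    "AuthFilter.check": "rejects requests that lack a valid session token",
    "ReportJob.run": "exports the nightly sales report to csv",
}


def vectorize(text: str) -> Counter:
    """Toy stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)  # Counter yields 0 for missing tokens
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the names of the k summaries most similar to the question."""
    q = vectorize(question)
    ranked = sorted(
        SUMMARIES,
        key=lambda name: cosine(q, vectorize(SUMMARIES[name])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, k: int = 1) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"- {name}: {SUMMARIES[name]}" for name in retrieve(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(build_prompt("Which code applies a discount to orders?"))
```

In a production setup the ranking would come from a vector index and the context could also include graph neighbors and runtime evidence, but the shape stays the same: retrieve first, then ask.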

The winning architectures combine deterministic analysis, structured representations, operational + semantic data platforms, and LLM reasoning.

Prompt-only approaches do not scale.

Copilots alone do not modernize systems.

Data foundations do.

If GenAI is the reasoning layer, MongoDB is where modernization knowledge lives, evolves, and stays queryable over time.

The Real Shift: From Software Factories to Software Cognition

Across all five sources, a single conclusion emerges.

The future of software development is not about producing more code faster.

It is about making existing systems intelligible, governable, and evolvable.

GenAI’s deepest impact is cognitive:

- Code becomes explorable knowledge.

- Architecture becomes visible.

- Legacy systems become understandable again.

- Modernization becomes incremental, not traumatic.

This is why the most advanced solutions rely on agents, graphs, and orchestration, not just copilots.

MongoDB AMP: An AI-Driven Approach to Modernization

At this point, a reasonable question emerges: why should a database company be a modernization partner?

The answer lies in a pattern that has repeated itself across countless modernization initiatives over the past decade. When transformation stalls, it is rarely because teams cannot rewrite code. It is because the data layer has become the hardest part to change. Decades of business logic embedded in schemas, stored procedures, triggers, and tightly coupled access patterns turn the database into the single largest blocker to evolution.

This is where MongoDB’s experience fundamentally differs from that of traditional tooling vendors. Through years of real-world migrations and modernization programs, MongoDB has seen that unlocking the data layer often removes the constraints that block the rest of the stack.

The MongoDB Application Modernization Platform (AMP) formalizes this learning into a full-stack, AI-driven modernization approach. AMP is not a single tool, but a structured methodology that combines three elements: experienced modernization practitioners, proprietary analysis and transformation tooling, and agentic AI workflows. Together, these elements enable legacy applications to be transformed incrementally, safely, and at scale.

At the core of AMP is a test-first philosophy. Before any transformation occurs, legacy systems are instrumented with comprehensive test coverage that captures real production behavior. This creates a behavioral baseline that acts as a safety net throughout the modernization journey. Every transformation step is validated against this baseline, ensuring that modernized services behave identically to the systems they replace. Modernization becomes a controlled, verifiable process rather than a leap of faith.
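The behavioral-baseline idea can be illustrated with a small characterization test. This is a generic sketch of the technique, not AMP's tooling: the shipping-fee functions are invented stand-ins for legacy and modernized code, and `record_baseline` and `find_regressions` are hypothetical helpers. Real baselines would be captured from production traffic rather than a hand-picked input list.

```python
from typing import Callable

Args = tuple[float, bool]


def legacy_shipping_fee(weight_kg: float, express: bool) -> float:
    """Stand-in for legacy logic whose rules are known only through its behavior."""
    fee = 5.0 if weight_kg <= 2 else 5.0 + (weight_kg - 2) * 1.5
    if express:
        fee *= 2
    return round(fee, 2)


def modern_shipping_fee(weight_kg: float, express: bool) -> float:
    """Candidate replacement that must reproduce the legacy behavior."""
    base = 5.0 + max(0.0, weight_kg - 2) * 1.5
    return round(base * (2 if express else 1), 2)


def record_baseline(fn: Callable[..., float], inputs: list[Args]) -> dict[Args, float]:
    """Capture the observed output of the legacy function for each input."""
    return {args: fn(*args) for args in inputs}


def find_regressions(fn: Callable[..., float], baseline: dict[Args, float]):
    """Return (args, expected, actual) for every input where behavior differs."""
    regressions = []
    for args, expected in baseline.items():
        actual = fn(*args)
        if actual != expected:
            regressions.append((args, expected, actual))
    return regressions


INPUTS: list[Args] = [(1.0, False), (2.0, True), (4.0, False), (10.0, True)]
baseline = record_baseline(legacy_shipping_fee, INPUTS)
regressions = find_regressions(modern_shipping_fee, baseline)
print("regressions:", regressions)
```

The same baseline can be reused after every transformation step, which is what turns it into a safety net rather than a one-off check.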

AMP begins with deep system analysis. Proprietary tooling decomposes complex legacy applications into smaller, testable components, uncovering hidden dependencies and embedded logic that typically remain invisible until late in a project. This analysis informs sequencing and planning, allowing teams to modernize incrementally instead of attempting risky “big bang” rewrites. Each step reduces overall system risk rather than accumulating it.

Agentic AI acts as a force multiplier on top of this foundation. Analysis outputs feed directly into AI-driven workflows that can generate additional test cases, transform code components, and even assist in redesigning the data layer for MongoDB. Tasks that once required weeks of manual effort can now be completed in hours, while maintaining rigorous validation standards. Customers have reported code transformation speeds improved by an order of magnitude, with overall modernization timelines shortened by a factor of two to three.

Crucially, AMP does not treat AI as an autonomous actor. The guardrails built through years of customer engagements ensure that AI accelerates proven processes rather than bypassing them. AI-generated transformations are continuously validated, repaired, and verified against legacy behavior. This combination of deterministic analysis, automated testing, and AI-assisted execution is what allows AMP to scale modernization safely.

The result is a model where legacy systems are no longer static liabilities. They become systems that can be understood, decomposed, and evolved continuously, without disrupting the business functions they support. In this sense, MongoDB AMP fits naturally into the broader shift described throughout this article: from modernization as a one-time, high-risk event to modernization as an ongoing, governed capability.

Conclusion

AI tools and agents are not replacing developers.

They are changing where human intelligence is applied.

Less effort spent deciphering the past.

More effort spent designing the future.

For organizations burdened by decades of legacy software, this is not a productivity gain. It is a strategic unlock.

Modernization stops being a once-in-a-generation gamble and becomes a continuous, governed process.

And that is how software starts evolving again.