MongoDB Data Modeling: The Shift to an Application-First Mindset

Most data models don’t break in development.

They break under real workload pressure.

This is especially true in MongoDB, where performance depends directly on data design, so the model has to follow the application’s requirements.

Modern systems do not fail because of technology.

They fail because of bad data models.

For decades, relational thinking shaped how engineers approached persistence. Normalize everything. Remove duplication. Join later. It worked well in a world of megabytes, batch jobs, and predictable workloads.

That world is gone. Today we design for:

• millions of users

• petabytes of data

• real-time APIs

• AI-driven workloads

MongoDB data modeling therefore needs to be an integral part of your development strategy, starting with an evaluation of how the data will be queried.

As highlighted in the methodology deck, application scale has moved from megabytes in the 1970s to petabyte and exabyte ranges today.

In this environment, data modeling must evolve, and an application-first mindset becomes essential for modern architectures.

This article shows:

• the real modeling process for MongoDB

• how to answer the classic NoSQL objections

• where duplication is smart, not dangerous

• why the document model often wins in modern systems

The starting point is a modeling approach that emphasizes workloads instead of tables.

No marketing fluff. Just architecture.

The Mindset Shift: From Storage-First to Application-First

Traditional modeling starts from tables – MongoDB modeling starts from workloads.

The key principle is simple:

Data that is used together should live together.

Modeling around workloads lets you optimize for the specific access patterns your application has.

This idea appears explicitly in the methodology: the document model changes data modeling through embedding, referencing, and flexible schema design.

Relational thinking optimizes for storage elegance.

Document thinking optimizes for runtime behavior.

In distributed systems, runtime wins.

Step 1. Start From Requirements, Not Tables

Before touching any schema, ask:

• What are the main queries?

• What is the read/write ratio?

• What latency is required?

• What is the data growth curve?

The methodology emphasizes identifying the project type based on:

• lots of writes

• lots of reads

• low-latency reads

• massive data volume

• simplicity requirements

This is not academic. It directly drives your model.

Example:

If you have 40 million product reads per day, optimizing reads becomes mandatory.

Normalization alone will not save you.
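To put the 40 million figure in perspective, a quick back-of-the-envelope conversion helps. The sketch below assumes reads are spread over a full 24 hours and applies a hypothetical 5x peak factor; both are illustrative assumptions, not numbers from the methodology.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def average_reads_per_second(reads_per_day: int) -> float:
    """Average rate if reads were spread evenly over the day."""
    return reads_per_day / SECONDS_PER_DAY


def peak_reads_per_second(reads_per_day: int, peak_factor: float) -> float:
    """Rough peak estimate; peak_factor is an assumed multiplier."""
    return average_reads_per_second(reads_per_day) * peak_factor


avg = average_reads_per_second(40_000_000)
peak = peak_reads_per_second(40_000_000, peak_factor=5.0)  # 5x is hypothetical
print(f"average: {avg:.0f} reads/s, assumed peak: {peak:.0f} reads/s")
# prints "average: 463 reads/s, assumed peak: 2315 reads/s"
```

Even the flat average is hundreds of reads per second, which is why every extra lookup on that path gets multiplied quickly.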

Step 2. Classify Your Entities

One of the most underrated steps.

In MongoDB modeling, entities fall into clear categories:

Strong entities

These are the objects your queries return. They are prime collection candidates.

Lookup entities

Small, slow-changing reference data. Often duplicated safely, which keeps extra lookups off the read path.

Weak entities

Objects that do not exist alone. Usually embedded.

Associative entities

In relational systems these break many-to-many relationships. In MongoDB they are often unnecessary.

This classification already hints at where embedding will shine.
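As a rough illustration, here is how the four categories might show up in a single document. The product catalog, field names, and values are hypothetical; the document is a plain Python dict standing in for what would be stored in a collection.

```python
product = {
    "_id": "p-100",  # strong entity: queries return products
    "name": "Trail Running Shoe",
    # Lookup entity: small, slow-changing, so its label is duplicated here.
    "category": {"code": "SHOES", "label": "Shoes"},
    # Weak entities: variants do not exist without their product.
    "variants": [
        {"sku": "p-100-42", "size": 42, "stock": 7},
        {"sku": "p-100-43", "size": 43, "stock": 0},
    ],
    # An array of ids stands in for a product_tags associative table.
    "tag_ids": ["t-outdoor", "t-running"],
}


def in_stock_sizes(doc: dict) -> list[int]:
    """Answer a typical product-page question from one document."""
    return [v["size"] for v in doc["variants"] if v["stock"] > 0]


print(in_stock_sizes(product))  # prints [42]
```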

Step 3. Understand Relationships the MongoDB Way

Here is where most relational veterans get nervous.

The eternal question appears:

“Should I embed or reference?”

Identifying when to embed is a key part of MongoDB data modeling. The methodology provides a decision framework based on real questions:

• Are the data queried together?

• Are they updated together?

• Is cardinality bounded?

• Will the document grow without limit?

• Can the child exist independently?

This is engineering, not dogma. It is exactly the application-first philosophy.
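The five questions can be turned into a small checklist function. This is a minimal sketch: the `Relationship` class, the `suggest` function, and the order in which the answers are weighed are assumptions made for illustration, not a rule set taken from the methodology.

```python
from dataclasses import dataclass


@dataclass
class Relationship:
    queried_together: bool
    updated_together: bool
    bounded_cardinality: bool
    unbounded_growth: bool
    child_independent: bool


def suggest(rel: Relationship) -> str:
    """Return a starting suggestion: 'embed' or 'reference'."""
    # Unbounded arrays are treated as a hard stop for embedding.
    if rel.unbounded_growth or not rel.bounded_cardinality:
        return "reference"
    # A child with its own lifecycle usually gets its own collection.
    if rel.child_independent:
        return "reference"
    if rel.queried_together or rel.updated_together:
        return "embed"
    return "reference"


customer_address = Relationship(True, True, True, False, False)
customer_orders = Relationship(False, False, False, True, True)
print(suggest(customer_address))  # prints "embed"
print(suggest(customer_orders))   # prints "reference"
```

Treat the result as a first draft of the decision, to be confirmed against the actual queries.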

When to Embed

Embed when:

• data is read together

• cardinality is bounded

• child has no independent life

• updates happen together

• low latency is critical

Classic example:

Customer with address.

Embedding removes joins and simplifies the model.
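A sketch of the customer-with-address case, using a plain dict as the document. The field names and values are hypothetical; the point is that one read returns everything a shipping label needs.

```python
customer = {
    "_id": "c-1",
    "name": "Ada Lopez",
    # The address is embedded: it is read with the customer and has no
    # independent life in this model.
    "address": {
        "street": "12 Harbor Road",
        "city": "Lisbon",
        "postal_code": "1100-001",
        "country": "PT",
    },
}


def shipping_label(doc: dict) -> str:
    """Build a label from a single customer document, no second lookup."""
    a = doc["address"]
    return f'{doc["name"]}, {a["street"]}, {a["postal_code"]} {a["city"]}, {a["country"]}'


print(shipping_label(customer))
# prints "Ada Lopez, 12 Harbor Road, 1100-001 Lisbon, PT"
```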

When to Reference

Reference when:

• cardinality is very high

• child entities are shared

• updates happen independently

• document size would explode

• data has its own lifecycle

Example:

Orders referencing customers.

Even the methodology shows different outcomes for Customer–Address versus Orders–Customers relationships.
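The referencing side can be sketched the same way. Two in-memory structures stand in for a `customers` and an `orders` collection (hypothetical names and data); each order carries only the customer's id, so the order list can grow without touching the customer document.

```python
customers = {
    "c-1": {"_id": "c-1", "name": "Ada Lopez"},
}

orders = [
    {"_id": "o-1", "customer_id": "c-1", "total": 120.0},
    {"_id": "o-2", "customer_id": "c-1", "total": 35.5},
    {"_id": "o-3", "customer_id": "c-2", "total": 10.0},
]


def orders_for(customer_id: str) -> list[dict]:
    """Equivalent in spirit to a find on orders filtered by customer_id."""
    return [o for o in orders if o["customer_id"] == customer_id]


ada_orders = orders_for("c-1")
print(len(ada_orders), sum(o["total"] for o in ada_orders))  # prints "2 155.5"
```

In a real deployment this access path would normally be backed by an index on the reference field.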

The Big Objection 1: “But What About Joins?”

This is the first reflex from relational purists.

Reality check.

MongoDB does support joins through the $lookup aggregation stage. But the real question is different:

Should you need them for your hot path?

The Extended Reference Pattern exists precisely because too many joins in read operations hurt performance.

The solution is pragmatic:

Copy the few fields you need for the most common reads.

Not everything. Only what matters.

This is surgical denormalization.
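The contrast can be sketched as follows. The pipeline uses the standard `$match`, `$lookup`, and `$unwind` stages and is shown only as data; the collection names, field names, and the `extended_reference` helper are hypothetical.

```python
# Option 1: join on the hot path. Every order read also touches customers.
lookup_pipeline = [
    {"$match": {"_id": "o-1"}},
    {"$lookup": {
        "from": "customers",
        "localField": "customer_id",
        "foreignField": "_id",
        "as": "customer",
    }},
    {"$unwind": "$customer"},
]


# Option 2: copy the few fields the common read needs into the order.
def extended_reference(source: dict, fields: list[str]) -> dict:
    """Keep the reference key plus only the listed fields."""
    return {"_id": source["_id"], **{f: source[f] for f in fields}}


customer = {
    "_id": "c-1",
    "name": "Ada Lopez",
    "email": "ada@example.com",
    "tier": "gold",
}
order = {
    "_id": "o-1",
    "total": 120.0,
    "customer": extended_reference(customer, ["name", "tier"]),
}
print(order["customer"])
# prints {'_id': 'c-1', 'name': 'Ada Lopez', 'tier': 'gold'}
```

The full customer document still lives in its own collection; the order only carries what its most common read displays.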

The Big Objection 2: “Data Duplication Is Dangerous”

This is the most emotional objection. It is also often misunderstood. Duplication is not binary. It has types.

The methodology identifies four categories:

Immutable data

Never changes. Perfect candidate for duplication.

Temporal data

Value at a specific time matters more than the latest value.

Very sensitive to staleness

Requires coordinated updates.

Not very sensitive to staleness

Can be refreshed periodically.

This classification is pure gold in real projects.
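One way to make the four categories actionable is to attach a synchronization strategy to each. The mapping below is an illustrative assumption, not wording from the methodology, and the catalog and order data are hypothetical. The second half shows the temporal case: an order line keeps the price that applied at purchase time.

```python
# Possible handling per duplication category (illustrative mapping).
SYNC_STRATEGY = {
    "immutable": "copy once, never update",
    "temporal": "snapshot at write time, never update",
    "very_sensitive": "update all copies together",
    "not_very_sensitive": "refresh copies with a periodic job",
}

catalog = {"p-100": {"name": "Trail Running Shoe", "price": 89.0}}


def add_order_line(order: dict, product_id: str, quantity: int) -> None:
    """Snapshot the product fields that must reflect the time of purchase."""
    product = catalog[product_id]
    order["lines"].append({
        "product_id": product_id,
        "name": product["name"],
        "unit_price": product["price"],  # temporal: price at purchase time
        "quantity": quantity,
    })


order = {"_id": "o-1", "lines": []}
add_order_line(order, "p-100", 2)

catalog["p-100"]["price"] = 99.0  # a later price change in the catalog

print(order["lines"][0]["unit_price"])  # prints 89.0
```

Here the duplicated price is not a consistency risk. Overwriting it with the latest catalog value would be the bug.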

Why Smart Duplication Wins

The deck is explicit about the tradeoff.

Benefits:

• improved performance

• better scalability

• cost reduction

• resilience

• predictability

Risks:

• possible inconsistency

• operational overhead

• business risks if misused

In high-scale systems, performance and predictability often dominate.

The real skill is controlled duplication, not blind normalization.

Step 4. Use Patterns Only When Needed

Another mature principle. Schema design patterns are powerful, but they are not decoration.

The guidance is clear:

Only use schema design patterns if needed. Schemas do not have to be complex.

This is where senior architects distinguish themselves.

Example: The Computed Pattern

Problem:

Expensive computation repeated many times.

Solution:

Precompute and store the result.

Benefits:

• faster reads

• lower CPU usage

Tradeoff:

Possible staleness.

In read-heavy platforms, this pattern is often decisive.
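A minimal sketch of the idea, assuming a product with user ratings (hypothetical fields). The average is maintained on each write, so reads never recompute it. Against a real collection, the counters could be maintained with an `$inc` update on the same fields.

```python
product = {
    "_id": "p-100",
    "rating_count": 0,
    "rating_sum": 0,
    "rating_avg": None,  # precomputed value served to readers
}


def add_rating(doc: dict, rating: int) -> None:
    """Pay the computation cost once per write instead of once per read."""
    doc["rating_count"] += 1
    doc["rating_sum"] += rating
    doc["rating_avg"] = doc["rating_sum"] / doc["rating_count"]


for r in (5, 4, 3):
    add_rating(product, r)

print(product["rating_avg"])  # prints 4.0
```

If the stored value is refreshed by a periodic job instead of on every write, readers may briefly see an older average. That is the staleness tradeoff.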

Example: The Extended Reference Pattern

Problem:

Too many joins.

Solution:

Embed only the frequently accessed fields.

Benefits:

• faster reads

• fewer lookups

Tradeoff:

Controlled duplication.

This pattern alone resolves many NoSQL debates.
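The "controlled" part of controlled duplication is the write side: when a copied field changes, the copies have to be found and updated. The sketch below simulates that in memory with hypothetical data. The two dicts at the end show the filter and update documents that could be passed to an update-many call in a real deployment, using dot notation on the embedded copy.

```python
customers = {
    "c-1": {"_id": "c-1", "name": "Ada Lopez", "email": "ada@example.com"},
}

orders = [
    {"_id": "o-1", "customer": {"_id": "c-1", "name": "Ada Lopez"}},
    {"_id": "o-2", "customer": {"_id": "c-2", "name": "Grace Kim"}},
    {"_id": "o-3", "customer": {"_id": "c-1", "name": "Ada Lopez"}},
]


def rename_customer(customer_id: str, new_name: str) -> int:
    """Update the source of truth, then every copy; return copies touched."""
    customers[customer_id]["name"] = new_name
    touched = 0
    for order in orders:
        if order["customer"]["_id"] == customer_id:
            order["customer"]["name"] = new_name
            touched += 1
    return touched


print(rename_customer("c-1", "Ada Lopez-Meyer"))  # prints 2

# The same propagation expressed as filter and update documents:
orders_filter = {"customer._id": "c-1"}
orders_update = {"$set": {"customer.name": "Ada Lopez-Meyer"}}
```

Because only a couple of fields are copied, the set of writes that trigger this propagation stays small.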

[Figure: Application-first data modeling in MongoDB: embedding, referencing, and controlled duplication in practice.]

Step 5. Validate With Telemetry, Not Opinions

Architecture debates are cheap.

Metrics are truth.

The methodology recommends:

• using explain plans

• ensuring index usage

• collecting API telemetry

• monitoring latency percentiles
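Checking index usage can be partly automated. The sketch below walks the winning plan of an explain result and reports whether it relies on an index scan. The sample document is a trimmed, hand-written illustration of the classic `queryPlanner.winningPlan` shape; real explain output carries many more fields and may nest differently depending on the server version.

```python
def plan_stages(plan: dict) -> list[str]:
    """Collect stage names from a winning plan, outermost first."""
    stages = [plan["stage"]]
    if "inputStage" in plan:
        stages += plan_stages(plan["inputStage"])
    for child in plan.get("inputStages", []):
        stages += plan_stages(child)
    return stages


def uses_index(explain: dict) -> bool:
    """True when the plan contains an index scan and no collection scan."""
    stages = plan_stages(explain["queryPlanner"]["winningPlan"])
    return "IXSCAN" in stages and "COLLSCAN" not in stages


sample_explain = {  # illustrative, not captured from a real server
    "queryPlanner": {
        "winningPlan": {
            "stage": "FETCH",
            "inputStage": {"stage": "IXSCAN", "indexName": "sku_1"},
        }
    }
}
print(uses_index(sample_explain))  # prints True
```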

A powerful rule emerges:

If latency is above target, optimize for performance.

If latency is below target, optimize for simplicity.

That is production wisdom.
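The rule is easy to encode once latency samples are available. This sketch uses the nearest-rank method for percentiles. The sample values and the 50 ms target are hypothetical, and treating a result exactly at the target as "below" is an assumption.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest value covering p percent of samples."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def next_focus(samples: list[float], target_ms: float) -> str:
    """Apply the rule: above target means performance, otherwise simplicity."""
    if percentile(samples, 95) > target_ms:
        return "optimize for performance"
    return "optimize for simplicity"


latencies_ms = [12, 15, 11, 14, 13, 90, 16, 12, 13, 14]
print(percentile(latencies_ms, 50))             # prints 13
print(percentile(latencies_ms, 95))             # prints 90
print(next_focus(latencies_ms, target_ms=50))   # prints "optimize for performance"
```

Note how the median looks healthy while the 95th percentile does not. That is why the methodology points at percentiles, not averages.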

Why the Document Model Wins in Modern Systems

Let’s be precise.

MongoDB is not universally better.

But in modern workloads it often provides structural advantages:

Fewer joins on hot paths: This reduces latency variability.

Natural fit for hierarchical data: JSON maps directly to documents.

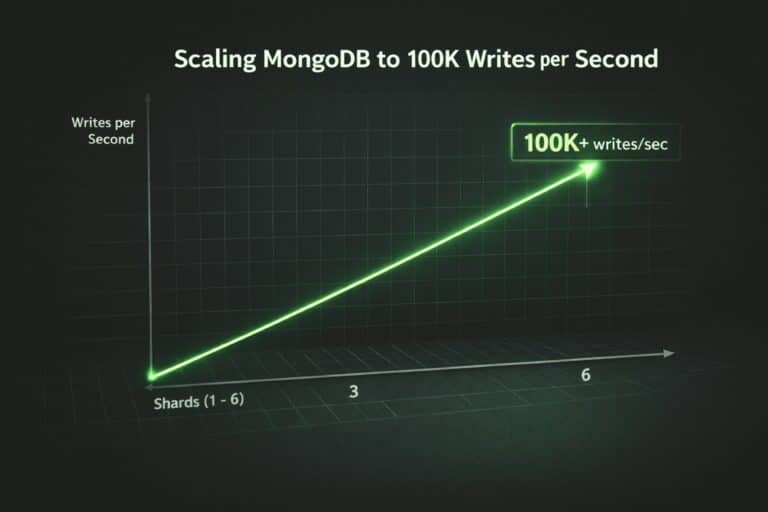

Better horizontal scalability: Because related data can be co-located.

Faster feature evolution: Thanks to flexible schema and versioning.

The methodology itself shows how the document model changes the relationship between the application and the data model, effectively flipping the traditional approach.

In distributed architectures, that inversion is powerful.

The Real Tradeoff: Elegance vs Throughput

Relational design optimizes for theoretical purity.

Document design optimizes for system behavior under load.

In small systems, both work.

At scale, physics enters the room.

Network hops matter.

Join fan-out matters.

Hot paths matter.

This is why modern high-volume platforms increasingly adopt document patterns even when relational systems remain in the stack.

Ultimately, the shift to an application-first mindset is about aligning data structures with real system behavior.

Final Takeaway

Good MongoDB modeling is not about embedding everything.

It is about:

• understanding workload first

• classifying entities correctly

• embedding with discipline

• duplicating with intent

• measuring continuously

When done properly, the document model does not create chaos.

It creates predictable, scalable systems that match how modern applications actually behave.

And that is the real goal of application-first MongoDB data modeling in 2026.