Forget the double migration. Use AI-driven semantic analysis to leap directly from Mainframe to document-oriented architectures.

EMEA PUBLIC SECTOR SERIES

This article is part of the EMEA Public Sector series, exploring why data sovereignty has become a critical priority for governments and public institutions across Europe, the Middle East, and Africa. As regulatory pressure, geopolitical risk, and AI adoption reshape digital strategies, data sovereignty is emerging as a key pillar of modern public sector data platform architectures, enabling control, resilience, and trusted digital services.

For decades, COBOL modernization followed a cautious two-step choreography. First, move the data from the Mainframe into a relational database. Second, refactor the application into modern services.

It looks prudent. It feels safe. It is often structurally misaligned with how the original systems actually work.


This relational detour introduces an artificial layer between legacy business logic and modern cloud-native architectures. The result is predictable: join-heavy access patterns, fragile performance under concurrency, and in many cases a second migration that nobody planned or funded.


What has changed recently is not simply better code generation. The real shift is the emergence of AI systems capable of semantic system comprehension at scale.


The Hidden Nature of COBOL Data


One persistent misconception is that COBOL systems are naturally tabular because they are old.

They are not.

COBOL copybooks encode hierarchical business aggregates. They describe customers with nested policies, accounts with repeating movements, claims with structured subcomponents. In modern architectural language, they already resemble aggregates more than normalized entities.


When these structures are forced into third normal form, the system is not being modernized. It is being decomposed.


This is where the impedance mismatch begins.


Where the Relational Path Starts to Fracture


Across banking and public sector modernization programs, the same three pathologies appear.

First, the join tax.

A single business entity that once lived as one contiguous record on the Mainframe becomes fragmented across multiple tables. Every modern API call must reconstruct what used to be naturally co-located. CPU consumption increases, query plans become fragile, and caching becomes mandatory rather than optional.


Second, semantic erosion.


During normalization workshops, subtle business meaning is often lost. Fields that were contextually grouped become technically separated. Downstream teams must reverse engineer intent from schema instead of inheriting it from structure.

Third, the double migration effect.

Many organizations that first land on an RDBMS later discover that digital channels, event streaming, and AI pipelines require flexible semi-structured access. The result is a second wave of transformation from SQL to NoSQL. Budget, timeline, and organizational patience all take another hit.

What AI Actually Unlocks in 2026

Much of the market noise focuses on AI that writes or translates COBOL. That is not the breakthrough that matters.

The real inflection point is semantic extraction at estate scale.

Modern frontier models can:

- parse thousands of copybooks

- correlate batch programs with online transactions

- identify true business aggregates

- detect dead or batch-only fields

- reconstruct fragmented domain concepts

Used correctly, AI stops being a translator and becomes an architectural discovery engine. This is the moment where the direct leap becomes not only possible, but often preferable.
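To make the "dead or batch-only field" idea concrete, here is a deliberately simple, non-AI stand-in: a static cross-reference that checks which copybook fields are mentioned by which programs. The program names, the fields `RECON-FLAG` and `OLD-AGENT-CODE`, and the `batch`/`online` labels are all assumptions made up for illustration; a real estate-scale analysis would also need data-flow and runtime evidence, which this sketch ignores.

```python
import re

def classify_fields(fields, programs):
    """Classify copybook fields by the kinds of programs that reference them.

    programs maps a program name to (kind, source), where kind is
    'batch' or 'online'. All names here are hypothetical.
    """
    result = {}
    for field in fields:
        # COBOL names contain hyphens, so match on explicit name boundaries.
        pattern = re.compile(
            r"(?<![A-Z0-9-])" + re.escape(field) + r"(?![A-Z0-9-])"
        )
        kinds = {
            kind
            for kind, source in programs.values()
            if pattern.search(source.upper())
        }
        if not kinds:
            result[field] = "dead"
        elif kinds == {"batch"}:
            result[field] = "batch-only"
        else:
            result[field] = "operational"
    return result

fields = ["POLICY-ID", "COVERAGE-LIMIT", "RECON-FLAG", "OLD-AGENT-CODE"]
programs = {
    "POLINQ": ("online", "MOVE POLICY-ID TO WS-KEY. DISPLAY COVERAGE-LIMIT."),
    "EODREC": ("batch", "IF RECON-FLAG = 'Y' PERFORM RECONCILE. MOVE POLICY-ID TO OUT-KEY."),
}
usage = classify_fields(fields, programs)
for name, label in usage.items():
    print(f"{name}: {label}")
```

A textual scan like this only finds references by name. It says nothing about whether a field carries business meaning, which is the part the semantic analysis is meant to supply.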

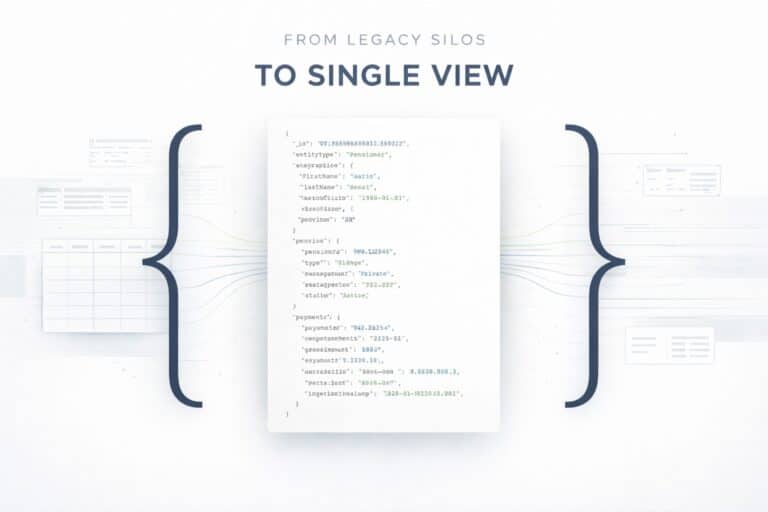

Example 1: From Copybook to Aggregate

Consider a realistic insurance pattern.

Legacy copybook:

    01  POLICY-RECORD.
        05  POLICY-ID          PIC X(12).
        05  POLICY-HOLDER      PIC X(40).
        05  COVERAGE-TABLE     OCCURS 50 TIMES.
            10  COVERAGE-TYPE  PIC X(10).
            10  COVERAGE-LIMIT PIC S9(9)V99.

Relational interpretation

The typical outcome is predictable:

- POLICY table

- COVERAGE table

- foreign keys

- join on every policy view

- additional indexes to survive production load

Operational symptom: high read amplification for simple policy retrieval.
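The read amplification can be seen even in a toy setup. The sketch below uses Python's built-in `sqlite3` with assumed table and column names (`policy`, `coverage`) and illustrative sample values. It shows that a simple "get policy" call has to join two tables and then reassemble the aggregate in application code.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical normalized schema derived from the copybook.
conn.executescript("""
    CREATE TABLE policy (policy_id TEXT PRIMARY KEY, policy_holder TEXT);
    CREATE TABLE coverage (
        policy_id TEXT REFERENCES policy(policy_id),
        coverage_type TEXT,
        coverage_limit INTEGER
    );
    INSERT INTO policy VALUES ('PL-884422', 'Maria Rossi');
    INSERT INTO coverage VALUES ('PL-884422', 'FIRE', 250000);
    INSERT INTO coverage VALUES ('PL-884422', 'THEFT', 50000);
""")

def load_policy(conn, policy_id):
    """Rebuild one policy aggregate from its normalized rows."""
    rows = conn.execute(
        """
        SELECT p.policy_id, p.policy_holder, c.coverage_type, c.coverage_limit
        FROM policy p
        LEFT JOIN coverage c ON c.policy_id = p.policy_id
        WHERE p.policy_id = ?
        ORDER BY c.rowid
        """,
        (policy_id,),
    ).fetchall()
    if not rows:
        return None
    policy = {"policyId": rows[0][0], "policyHolder": rows[0][1], "coverages": []}
    for _, _, coverage_type, coverage_limit in rows:
        if coverage_type is not None:  # a policy without coverages yields one NULL row
            policy["coverages"].append({"type": coverage_type, "limit": coverage_limit})
    return policy

policy = load_policy(conn, "PL-884422")
print(policy)
```

The header columns come back once per coverage row, so the result set repeats the policy data for every coverage before the code collapses it again.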

Direct document mapping

AI-assisted semantic extraction instead yields a natural aggregate:

    {
      "policyId": "PL-884422",
      "policyHolder": "Maria Rossi",
      "coverages": [
        {
          "type": "FIRE",
          "limit": 250000
        },
        {
          "type": "THEFT",
          "limit": 50000
        }
      ]
    }

The storage model now matches the access model. That alignment is the core architectural advantage.
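As a rough illustration of how mechanical the mapping becomes once the aggregate is identified, the following sketch decodes one fixed-width record laid out like the copybook above into such a document. It makes several simplifying assumptions that a real conversion could not: the record has already been converted from EBCDIC to text, `COVERAGE-LIMIT` is stored as plain unsigned digits (no zoned-decimal sign handling), and unused `OCCURS` slots are blank.

```python
from decimal import Decimal

POLICY_ID_LEN = 12     # POLICY-ID      PIC X(12)
HOLDER_LEN = 40        # POLICY-HOLDER  PIC X(40)
COVERAGE_SLOTS = 50    # COVERAGE-TABLE OCCURS 50 TIMES
TYPE_LEN = 10          # COVERAGE-TYPE  PIC X(10)
LIMIT_LEN = 11         # COVERAGE-LIMIT PIC S9(9)V99: 11 digits, 2 implied decimals
RECORD_LEN = POLICY_ID_LEN + HOLDER_LEN + COVERAGE_SLOTS * (TYPE_LEN + LIMIT_LEN)

def decode_policy(record: str) -> dict:
    """Turn one fixed-width text record into a nested policy document."""
    if len(record) != RECORD_LEN:
        raise ValueError(f"expected {RECORD_LEN} characters, got {len(record)}")
    policy_id = record[:POLICY_ID_LEN].rstrip()
    holder = record[POLICY_ID_LEN:POLICY_ID_LEN + HOLDER_LEN].rstrip()
    coverages = []
    offset = POLICY_ID_LEN + HOLDER_LEN
    for _ in range(COVERAGE_SLOTS):
        coverage_type = record[offset:offset + TYPE_LEN].rstrip()
        digits = record[offset + TYPE_LEN:offset + TYPE_LEN + LIMIT_LEN]
        offset += TYPE_LEN + LIMIT_LEN
        if not coverage_type:
            continue  # assumption: a blank type marks an unused OCCURS slot
        # V99 means two implied decimal places, so shift the exponent by -2.
        coverages.append({"type": coverage_type, "limit": Decimal(int(digits)).scaleb(-2)})
    return {"policyId": policy_id, "policyHolder": holder, "coverages": coverages}

# Build a sample record: two used slots, 48 blank ones.
slots = [("FIRE", 25_000_000), ("THEFT", 5_000_000)]  # limits in hundredths
used = "".join(f"{t:<{TYPE_LEN}}{v:0{LIMIT_LEN}d}" for t, v in slots)
unused = " " * (TYPE_LEN + LIMIT_LEN) * (COVERAGE_SLOTS - len(slots))
record = f"{'PL-884422':<{POLICY_ID_LEN}}{'Maria Rossi':<{HOLDER_LEN}}" + used + unused
doc = decode_policy(record)
print(doc["policyId"], len(doc["coverages"]))
```

The repeating group becomes an array and the empty slots disappear, with no foreign key or second table involved.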

Example 2: Separating Operational and Batch Fields

This is where AI adds disproportionate value.

In many estates, copybooks contain fields used only for end-of-day reconciliation, regulatory extracts, or archival reporting. Without semantic analysis, teams often migrate everything into the operational schema.

With AI-assisted classification, the operational document can remain lean:

    {
      "customerId": "C99127",
      "name": "Luca Bianchi",
      "accounts": [...]
    }

Batch-only attributes can instead flow into:

- cold collections

- data lake storage

- columnar warehouse

- or time-series platforms

This reduces document bloat and improves working set efficiency, especially under digital workloads.
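Once fields are classified, the routing itself is simple. The sketch below assumes a flat decoded record and a set of field names already labelled batch-only; the names `eodReconFlag` and `regulatoryExtractCode` are invented for illustration and do not come from any real copybook.

```python
def split_record(record: dict, batch_only: set, key: str = "customerId"):
    """Split a decoded record into an operational document and a batch-side document."""
    operational = {k: v for k, v in record.items() if k not in batch_only}
    archival = {k: v for k, v in record.items() if k in batch_only}
    if archival:
        # Keep the business key on both sides so the two halves can be re-linked.
        archival[key] = record[key]
    return operational, archival

record = {
    "customerId": "C99127",
    "name": "Luca Bianchi",
    "eodReconFlag": "Y",             # hypothetical batch-only field
    "regulatoryExtractCode": "R17",  # hypothetical batch-only field
}
operational, archival = split_record(record, {"eodReconFlag", "regulatoryExtractCode"})
print(operational)
print(archival)
```

The operational half goes to the serving collection, and the other half can be written to whichever cold or analytical store fits its use.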

Example 3: Access Pattern Alignment

Mainframe workloads were highly locality-aware. Many relational rewrites accidentally destroy that property.

Consider a common banking API pattern:

- fetch customer

- fetch last N movements

- compute balance snapshot

Relational path

Typical implementation involves:

- three or more joins

- heavy reliance on covering indexes

- sensitivity to cardinality changes

- increasing CPU under concurrency spikes

Document path

Single document read plus bounded array scan.

The difference becomes material at scale, especially under bursty digital traffic.
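The array scan is only "bounded" if the write path keeps it that way. A minimal in-memory sketch of that idea follows, with an assumed document shape (`balance` in cents plus a `movements` array) and an assumed cap of 20 entries. In MongoDB, a similar effect can be obtained with `$push` combined with the `$each` and `$slice` modifiers; the code here only emulates the behavior in plain Python.

```python
MAX_MOVEMENTS = 20  # assumed cap on embedded movements

def apply_movement(customer: dict, movement: dict, max_movements: int = MAX_MOVEMENTS) -> dict:
    """Update the balance and keep only the newest movements embedded."""
    if max_movements < 1:
        raise ValueError("max_movements must be positive")
    customer["balance"] += movement["amount"]
    customer["movements"].append(movement)
    # Drop everything except the last max_movements entries.
    del customer["movements"][:-max_movements]
    return customer

customer = {"customerId": "C123", "balance": 0, "movements": []}
for seq in range(1, 26):
    apply_movement(customer, {"seq": seq, "amount": seq})  # amounts in cents

print(len(customer["movements"]), customer["balance"])
```

Older movements would be written to a separate history collection rather than discarded. That step is omitted here.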

Benchmark Snapshot: Join Cost vs Document Read

To make the discussion concrete, consider a typical high-concurrency API workload.

Workload:

- getCustomerProfile(customerId)

- include last 20 transactions

- high concurrent traffic

Relational model

Query pattern:

    SELECT c.*, a.*, t.*
    FROM CUSTOMER c
    JOIN ACCOUNT a ON a.customer_id = c.id
    JOIN TRANSACTION t ON t.account_id = a.id
    WHERE c.id = ?
    ORDER BY t.date DESC
    LIMIT 20;

Typical behavior observed in the field:

- multiple index lookups

- join buffer pressure

- query plan sensitivity

- higher CPU under burst load

Document model

MongoDB query:

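    // Assumes each customer document embeds a "transactions" array
    // appended in chronological order, so the last 20 are the most recent.
    db.customers.findOne(
      { customerId: "C123" },
      { transactions: { $slice: -20 } }
    )

Typical behavior: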

- single document read

- no joins

- predictable latency

- smaller working set

- more stable under concurrency bursts

The key point is not just raw speed. It is operational predictability.

Mini Case Study: European Public Sector Modernization

Consider a real pattern observed in large public sector modernization programs.

Initial situation

Legacy estate:

- Mainframe batch plus online

- highly hierarchical copybooks

- approximately 800 million active records

- unpredictable digital peaks

Initial strategy:

Mainframe to RDBMS to microservices.

Problems after the first migration

Within the first year:

- queries increasingly join-heavy

- significant growth in database CPU

- difficulty supporting new digital channels

- rigid schema evolution

The root cause was not the application code. It was the data model misalignment.

Course correction

The organization initiated:

- semantic analysis of copybooks

- business aggregate identification

- document remodelling

- introduction of a document-native platform

Observed outcomes

Typical results included:

- significant reduction in critical joins

- improved stability under burst workloads

- faster time to market for new APIs

- better alignment with AI and analytics pipelines

The real modernization happened during the second architectural shift, not the first.

Reference Architecture: Copybook to JSON Flow

Conceptual flow:

Sharding Strategy for Large Modernization Programs

This is where many otherwise solid programs fail.

When datasets reach hundreds of millions of entities, the document model must be designed as shard-aware from day one.

Principle 1: Shard key must follow access patterns


Never derive the shard key directly from the copybook.

Instead analyze:

- dominant queries

- API access patterns

- traffic distribution

Example:

    sh.shardCollection(
      "bank.customers",
      { customerId: "hashed" }
    )

Principle 2: Avoid temporal hotspots

High-risk shard keys include:

- monotonically increasing IDs

- pure timestamps

- sequential keys

Prefer:

- hashed keys

- well-balanced compound keys

- time bucketing when necessary
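The hotspot effect is easy to reproduce in a small simulation. The sketch below routes the newest 1,000 values of a monotonically increasing ID to four shards, once by key range and once by hash. The shard count, key space, and the use of MD5 are assumptions for illustration only; this is a stand-in hash, not a model of any database's actual partitioning function.

```python
import hashlib
from collections import Counter

SHARDS = 4             # assumed number of shards
KEY_SPACE = 1_000_000  # assumed size of the ID range

def range_shard(key: int, shards: int = SHARDS, key_space: int = KEY_SPACE) -> int:
    """Range partitioning: contiguous key ranges map to the same shard."""
    return min(key * shards // key_space, shards - 1)

def hashed_shard(key: int, shards: int = SHARDS) -> int:
    """Hash partitioning: neighbouring keys scatter across shards."""
    digest = hashlib.md5(str(key).encode()).digest()
    return int.from_bytes(digest[:8], "big") % shards

# The most recent inserts all sit at the top of the key range.
recent_ids = range(999_000, 1_000_000)
range_load = Counter(range_shard(k) for k in recent_ids)
hashed_load = Counter(hashed_shard(k) for k in recent_ids)

print("range :", dict(range_load))
print("hashed:", dict(sorted(hashed_load.items())))
```

With range routing every recent write lands on the last shard, while hashing spreads the same writes across all of them. The trade-off is that hashed keys give up efficient range scans on that field.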


Principle 3: Separate hot and cold data

An effective large-scale strategy includes:

- lean operational documents

- heavy historical data in separate collections

- tiered storage or TTL policies

This keeps the working set efficient and memory-resident where it matters.
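A minimal sketch of the hot/cold split, assuming movements carry a `date` field and that "hot" means the last 90 days. Both the field name and the threshold are illustrative choices, not recommendations.

```python
from datetime import date, timedelta

def split_hot_cold(movements: list, today: date, hot_days: int = 90):
    """Partition movements into recent (hot) and historical (cold) lists."""
    cutoff = today - timedelta(days=hot_days)
    hot = [m for m in movements if m["date"] >= cutoff]
    cold = [m for m in movements if m["date"] < cutoff]
    return hot, cold

today = date(2026, 3, 1)
movements = [
    {"id": 1, "date": date(2025, 6, 15)},
    {"id": 2, "date": date(2026, 1, 10)},
    {"id": 3, "date": date(2026, 2, 27)},
]
hot, cold = split_hot_cold(movements, today)
print([m["id"] for m in hot], [m["id"] for m in cold])
```

The hot list stays in the operational document or collection. The cold list is a candidate for a history collection, tiered storage, or expiry via TTL.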

Principle 4: Design for event and AI ecosystems

A 2026 modernization is not only about APIs.

It must support:

- change streams

- event-driven architectures

- feature engineering pipelines

- RAG and AI workloads

- near real-time analytics

The document model should be designed with this future in mind.

When the Direct Leap Is Not the Right Choice

Architectural credibility requires acknowledging boundaries.

The direct document leap may not be optimal when:

- workloads are heavily ad hoc relational analytics

- strict multi-row ACID across many entities dominates

- legacy SQL ecosystem lock-in is extreme

- organizational skills are purely relational

In these cases, a hybrid approach can be more pragmatic.

The key is conscious architecture, not ideology.

The Strategic Takeaway

COBOL was never the real problem. Misaligned data models were.

For the first time, we have AI systems capable of reading legacy estates semantically, not just syntactically. That fundamentally changes the economics of modernization.

Programs that still default automatically to relational normalization in 2026 risk rebuilding yesterday’s bottlenecks on tomorrow’s infrastructure.

The emerging winning path is increasingly clear:

from Mainframe

to semantic extraction

to document-native platforms

to AI-ready data foundations

Direct. Intent-preserving. Cloud-aligned.
