From Proof of Concept to Operational Insight

During multiple on-site engagements with enterprise customers, I have repeatedly encountered the same challenge: traditional processes struggling to keep pace with modern operational complexity.

To address this gap, I built a series of targeted proofs of concept designed to demonstrate how computer vision and AI can transform well-known scenarios into intelligent, automated systems.

At the core of these experiments lies IBM Watson Visual Recognition, applied in a pragmatic, production-oriented way rather than as a theoretical exercise.

Use Cases Explored

The PoCs apply visual recognition to scenarios that are common, measurable, and immediately valuable:

- Quality control: automating visual inspection to reduce human error and accelerate validation processes.

- Image classification: structuring unlabelled visual data into meaningful, actionable categories.

- Virtual receptionist: enabling image-based interaction to support smart front-desk and access-control systems.

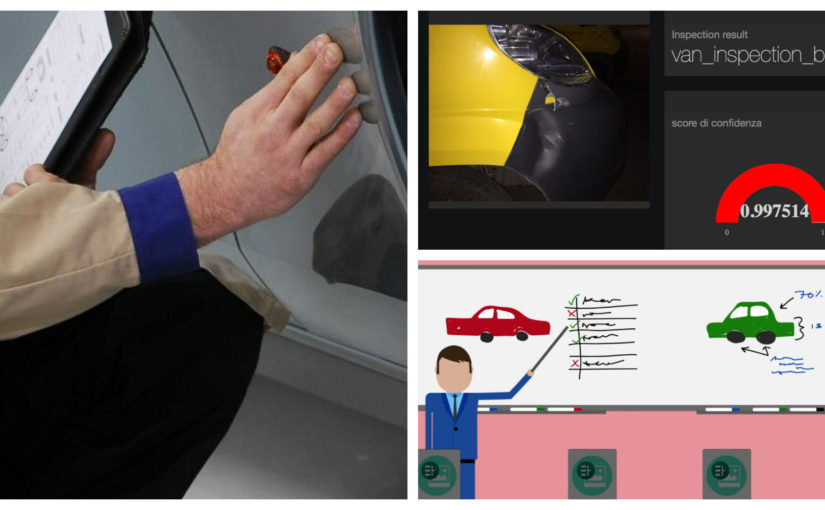

Fleet Damage Detection: A Concrete Example

One of the most interesting scenarios under exploration involves automated inspection of fleet vehicles.

When a van or truck returns to a company hub, a camera system can automatically analyze its external condition. Using visual recognition, the system can detect:

- Scratches

- Dents

- Bodywork anomalies

- Visible damage acquired during operation

In this initial proof of concept, the model was intentionally kept simple to validate feasibility and accuracy:

- Two classification classes only:

  - Damaged vehicle

  - Undamaged vehicle

- The damage class was trained exclusively on scratches and dents, allowing the model to focus on high-frequency, high-impact defects.

Despite its simplicity, the results clearly demonstrate how AI-driven inspection can reduce manual checks, improve consistency, and create a reliable audit trail for fleet management.
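To make the two-class setup concrete, here is a minimal Python sketch of how a classification response could be reduced to a single damaged/undamaged verdict. It is an illustration under assumptions, not the code used in the PoC: the class labels `damaged` and `undamaged`, the 0.5 threshold, and the nested `images` / `classifiers` / `classes` layout of the response are all assumed for the example and should be checked against the actual service output.

```python
from typing import Any, Dict

# Hypothetical labels and threshold: the post does not state the real ones.
DAMAGED = "damaged"
UNDAMAGED = "undamaged"
DAMAGE_THRESHOLD = 0.5


def interpret_classification(
    response: Dict[str, Any], threshold: float = DAMAGE_THRESHOLD
) -> Dict[str, Any]:
    """Reduce a classify-style response to one damaged/undamaged verdict.

    Assumes a layout of the form
    {"images": [{"classifiers": [{"classes": [{"class": ..., "score": ...}]}]}]}.
    """
    scores: Dict[str, float] = {}
    for image in response.get("images", []):
        for classifier in image.get("classifiers", []):
            for entry in classifier.get("classes", []):
                name = entry["class"]
                # Keep the highest score seen for each class label.
                scores[name] = max(scores.get(name, 0.0), float(entry["score"]))

    damaged_score = scores.get(DAMAGED, 0.0)
    verdict = DAMAGED if damaged_score >= threshold else UNDAMAGED
    return {"verdict": verdict, "damaged_score": damaged_score}


# Illustrative input only; the values are made up for the example.
sample_response = {
    "images": [
        {
            "classifiers": [
                {
                    "classes": [
                        {"class": "damaged", "score": 0.82},
                        {"class": "undamaged", "score": 0.11},
                    ]
                }
            ]
        }
    ]
}
print(interpret_classification(sample_response))
```

Treating a missing or low `damaged` score as "undamaged" is a design choice made for this sketch; a production system might instead flag low-confidence images for manual review.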

Architecture of the Proof of Concept

The PoC was built using a lightweight, modular stack to emphasize speed of iteration and clarity:

- Node-RED for orchestration and flow logic

- Freeboard for the real-time dashboard and visualization layer

- Watson Visual Recognition APIs for image analysis and classification

This approach allowed rapid experimentation while remaining close to architectures that enterprises can realistically adopt.
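The three layers can be pictured as a small pipeline: acquire an image, classify it, and publish a result the dashboard can read. The Python sketch below only illustrates that flow. In the PoC the orchestration lives in Node-RED flows, not in Python, and every name here (`InspectionResult`, `run_inspection`, `to_dashboard_json`, `stub_classifier`, the `van-001` identifier) is hypothetical. The classifier is a stub standing in for the call to the visual recognition service.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Callable, Tuple

# A classifier takes raw image bytes and returns (verdict, score).
Classifier = Callable[[bytes], Tuple[str, float]]


@dataclass
class InspectionResult:
    vehicle_id: str
    verdict: str
    score: float
    inspected_at: str  # ISO 8601 timestamp, UTC


def run_inspection(
    vehicle_id: str, image_bytes: bytes, classify: Classifier
) -> InspectionResult:
    """Orchestration step: pass the image to the classifier and record the outcome."""
    verdict, score = classify(image_bytes)
    timestamp = datetime.now(timezone.utc).isoformat()
    return InspectionResult(vehicle_id, verdict, score, timestamp)


def to_dashboard_json(result: InspectionResult) -> str:
    """Visualization step: serialize the result as JSON for a dashboard to consume."""
    return json.dumps(asdict(result))


def stub_classifier(image_bytes: bytes) -> Tuple[str, float]:
    """Placeholder for the real image-analysis call; returns fixed, made-up values."""
    return ("damaged", 0.9) if image_bytes else ("undamaged", 0.0)


result = run_inspection("van-001", b"\x00", stub_classifier)
print(to_dashboard_json(result))
```

Passing the classifier in as a function keeps the orchestration step independent of any particular service, which mirrors the modularity the stack is meant to provide.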

Live Demonstration

A working demo of the proof of concept was deployed directly on my Bluemix environment, showcasing the full flow from image acquisition to classification and visualization.

This experiment confirms a broader insight: visual recognition is no longer an experimental technology. When applied correctly, it becomes a practical tool for modernizing legacy processes and unlocking new operational intelligence.