Microsoft Brainwave hardware platform for real-time AI

Today, internet giants like Google, Facebook, Microsoft, and Amazon are exploring a wide range of chip technologies that can drive AI forward, building on chips produced by Intel and NVIDIA.

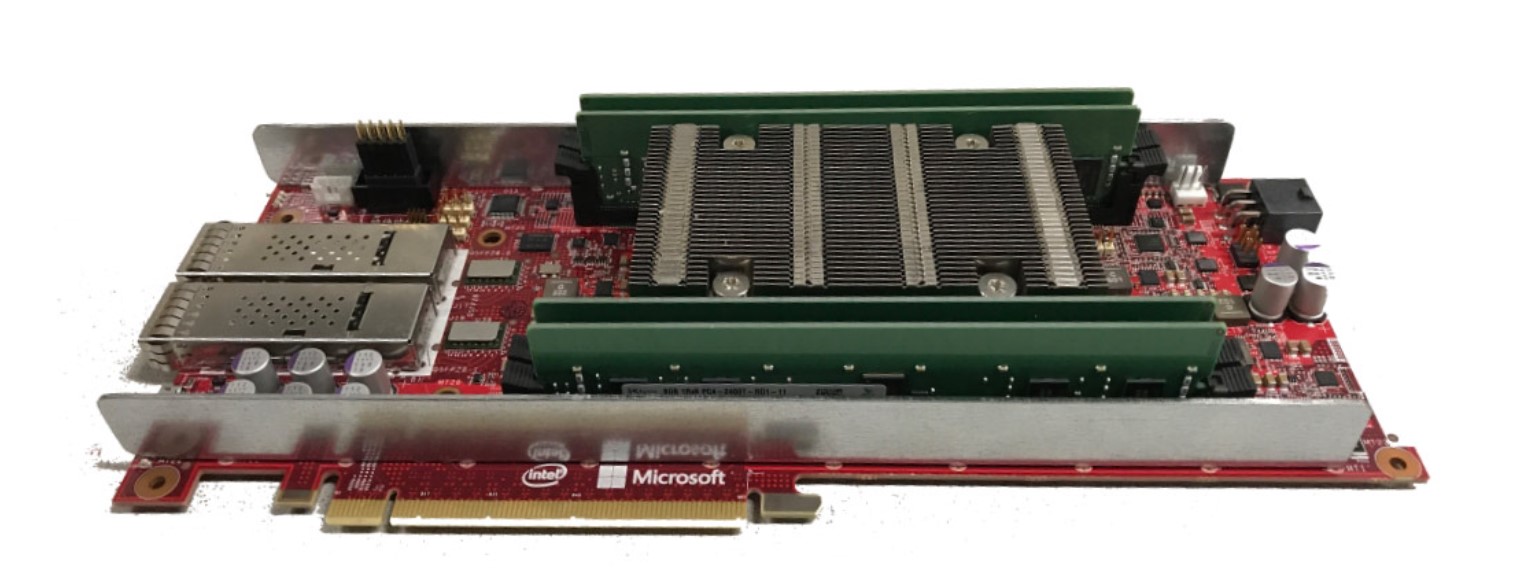

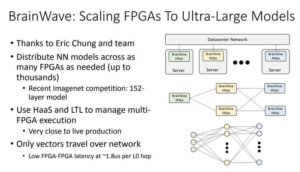

Microsoft announced Brainwave, which consists of a high-performance distributed system architecture; a hardware deep neural network (DNN) engine running on customizable chips known as field-programmable gate arrays (FPGAs); and a compiler and runtime for deployment of trained models.

Algorithms are written directly into the FPGA's logic, which makes them quite efficient, and the chips can easily be reprogrammed. This specialization makes FPGAs well suited to machine learning, specifically to its highly parallel computations. Brainwave can therefore synthesize DNN processing units (DPUs) into its FPGAs.
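To make the parallelism point concrete, here is a minimal Python sketch. It is not Brainwave code, and the function names are made up for illustration. It shows that every output of a dense neural-network layer is an independent multiply-accumulate, so all outputs can in principle be computed at the same time. Threads stand in for that independence here; an FPGA would instead lay out many multiply-accumulate units in hardware.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, x):
    """One output neuron: multiply-accumulate over a single weight row."""
    return sum(w * v for w, v in zip(row, x))


def dense_layer_sequential(weights, x):
    # Compute the output neurons one after another.
    return [dot(row, x) for row in weights]


def dense_layer_parallel(weights, x):
    # Each row's result depends only on that row and on x, so the rows can
    # be handed out to independent workers. The threads are illustrative
    # only; they are an assumption of this sketch, not how an FPGA works.
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda row: dot(row, x), weights))


weights = [[1, 2, 3], [4, 5, 6]]  # 2 output neurons, 3 inputs
x = [1, 1, 1]

print(dense_layer_sequential(weights, x))  # [6, 15]
print(dense_layer_parallel(weights, x))    # [6, 15]
```

Both versions return the same result; only the scheduling differs, which is the property that parallel hardware takes advantage of.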

This is a slightly different approach from Google's. Google builds a chip optimized for a very specific set of algorithms: it jumped into the complicated and very expensive business of building its own chips to give itself an edge in artificial intelligence. The chip, called the Tensor Processing Unit (TPU), is optimized to run Google's deep learning algorithms.

The FPGAs, built by Intel-owned Altera, give Microsoft’s Brainwave more flexibility than a dedicated chip, and flexibility is important when advances in deep learning are still emerging at an almost monthly (or weekly) pace.

Also unlike Google, Microsoft will support multiple deep learning frameworks, including Microsoft’s CNTK, Google’s TensorFlow and Facebook’s Caffe2.